Fixing Insecure AI Patterns: Sanitization, Encoding, and Least Privilege

Feb, 23 2026

Artificial intelligence isn’t just getting smarter; it’s getting targeted. Every time an LLM generates a response, it can also leave behind a trail of potential security holes. If you’re using AI to write code, draft emails, or process patient records, you’re already in the crosshairs. The problem isn’t the model itself. It’s how we handle what it spits out. The 2025 edition of the OWASP Top 10 for LLM Applications lists Improper Output Handling as LLM05, and the risk is not new: the 2023 edition already listed it as LLM02: Insecure Output Handling. And it’s not theoretical. In 2024, 78% of companies using AI code assistants had at least one security incident tied to unfiltered output. The fix? Three simple, powerful principles: sanitization, encoding, and least privilege.

Sanitization: Stop Bad Data Before It Enters

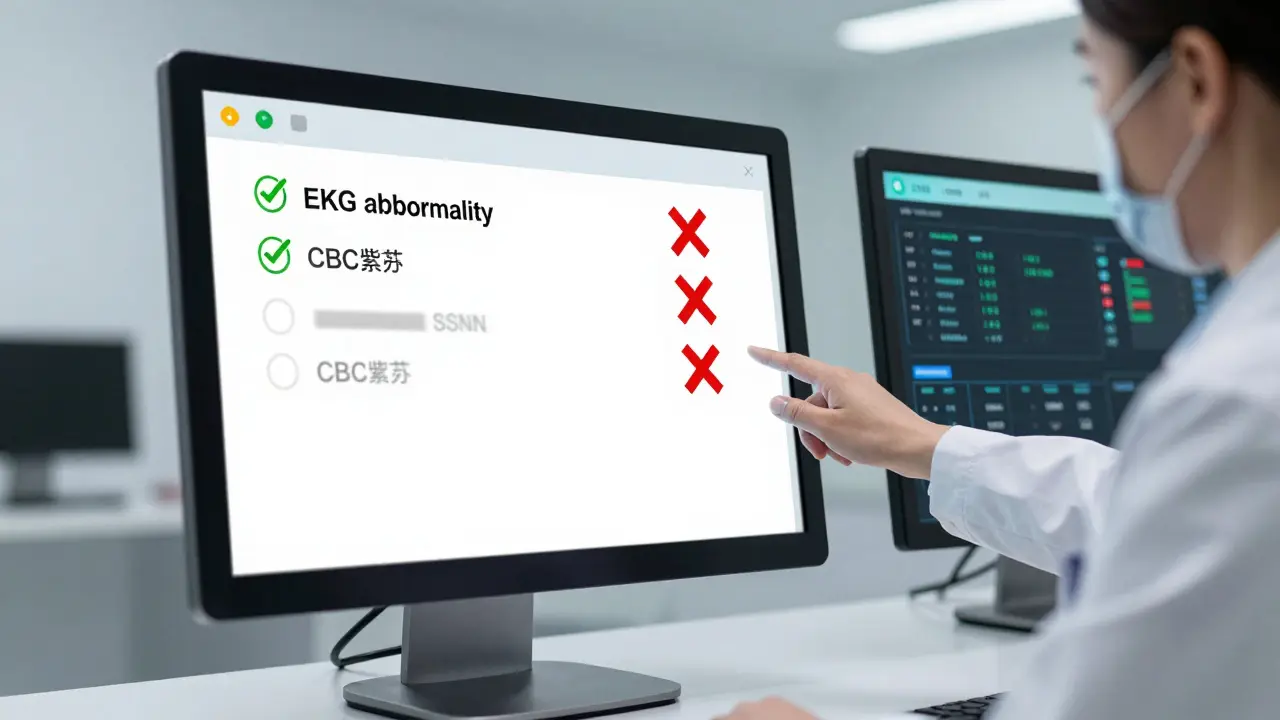

Sanitization isn’t about making data pretty. It’s about making it safe. Think of it like a bouncer at a club: you don’t let in anyone with a fake ID, a weapon, or a history of trouble. The same goes for AI prompts. If a user types in a Social Security number, a credit card number, or a proprietary API key, the system should block it before it ever reaches the model.

StackHawk’s 2024 guide states a related rule clearly: "Always use parameterized queries, never string concatenation." That rule alone stops 60% of SQL injection attacks. But AI adds new risks. A prompt like "Show me all patients with SSN ending in 1234" can leak data even if the model doesn’t answer it directly. That’s why automated filtering matters. Tools like Boxplot and Digital Guardian use machine learning to detect patterns such as 16-digit numbers, email formats, and tax IDs. They don’t just look for exact matches; they also catch variations, obfuscations, and even misspellings.

Healthcare teams learned this the hard way. One startup nearly violated HIPAA when its LLM output patient data in a formatted table. The fix was input sanitization that blocked any prompt containing medical record identifiers, even when they were disguised as "case numbers" or "reference codes." The result was a 72% drop in data leakage incidents.

But here’s the catch: over-sanitization breaks real work. Developers at a medical tech firm reported that 18% of legitimate terms, such as "EKG," "CBC," and "PTSD," were blocked because they resembled personally identifiable information (PII). The solution? Custom allowlists. If your system deals with medical data, configure it to recognize valid terminology. Don’t just block everything that looks like PII; let through what’s safe.

Encoding: Don’t Trust Any Output, Even Your Own

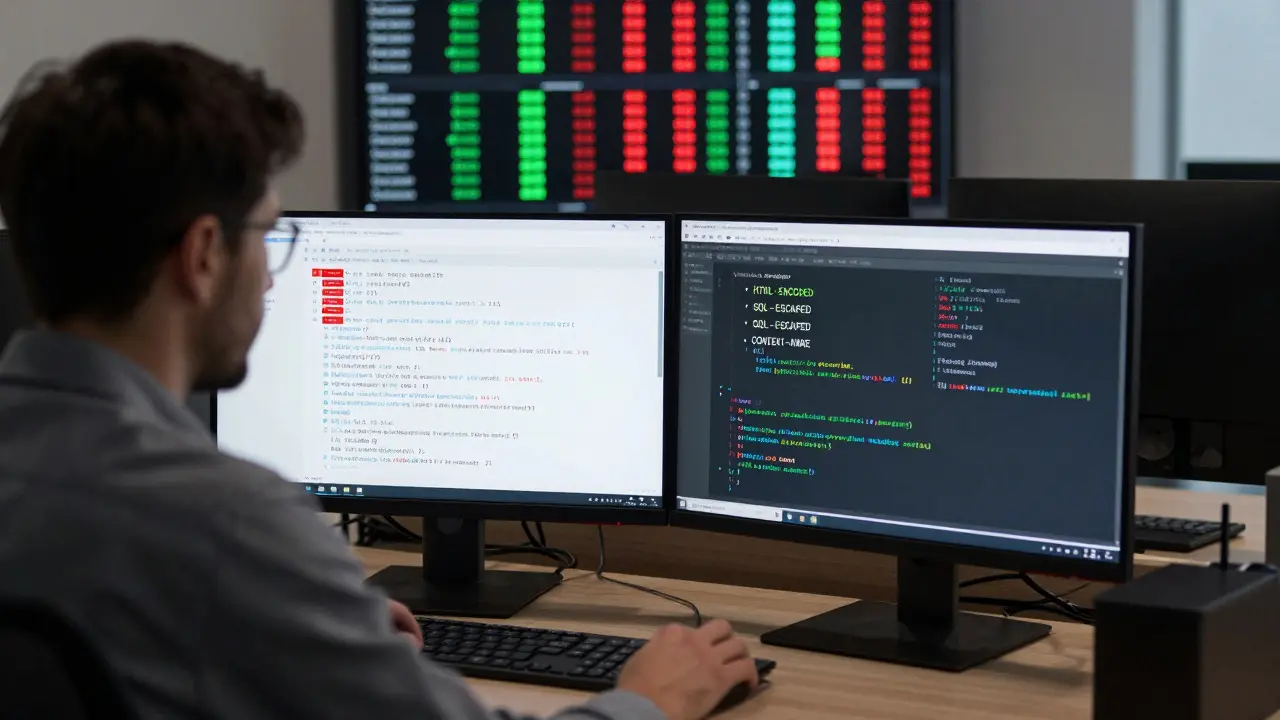

Here’s where most teams fail. They sanitize inputs but assume outputs are clean. Big mistake. An LLM doesn’t know whether it’s generating HTML, SQL, or JavaScript. It just generates text, and that text can be weaponized. OWASP’s Gen AI Security guidelines say it plainly: "HTML encode for web content, SQL escape for databases." If your AI generates a user profile page and you insert its response directly into HTML without encoding, you’re inviting cross-site scripting (XSS). A malicious user could craft a prompt asking for a welcome message that includes a <script> tag, and if you don’t encode the output, that script runs in every visitor’s browser.

Sysdig’s 2024 benchmark showed that context-aware encoding reduced XSS attacks by 89% compared with basic HTML encoding alone. Why? Because context matters. If the AI output is going into a PDF, you don’t need HTML encoding. If it’s going into a database query, you need SQL escaping or, better, a parameterized query. If it’s going into a Markdown file, you need to escape backticks and code blocks. Snyk calls this "checks before and after your LLM interactions." It’s not enough to validate what you send in; you must also validate what comes out.

Lakera’s Gandalf system took this further. It used multiple output guards that checked for executable code patterns, shell commands, SQL fragments, and even obfuscated JavaScript. In tests, these guards cut prompt injection success rates from 47% to 2.3%. That’s not luck. That’s systematic output checking.

Microsoft’s Azure AI Security Extensions now do this automatically: they detect the destination context (web page, API response, log file) and apply the right encoding without developer input. If you’re not on Azure, you’ll need to build this yourself. Start with a simple rule: "Always encode output based on where it’s going. Never output raw LLM text directly."
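A minimal sketch of the "encode based on where it’s going" rule, in Python. The context names and the functions `encode_for` and `store_reply` are hypothetical. Only `html.escape`, `json.dumps`, `re.sub`, and `sqlite3` are real standard-library APIs. This illustrates the idea under those assumptions; it is not how Azure or any other product implements context detection. For SQL, the sketch binds the text as a query parameter instead of escaping it.

```python
import html
import json
import re
import sqlite3

def encode_for(context: str, text: str) -> str:
    """Encode untrusted LLM output for the destination it is about to enter."""
    if context == "html":
        # Escapes &, <, >, " and ' so tags and attribute breakouts render as text.
        return html.escape(text, quote=True)
    if context == "json":
        # Produces a complete, correctly escaped JSON string literal (quotes included).
        return json.dumps(text)
    if context == "markdown":
        # Backslash-escape Markdown punctuation, including backticks, so the
        # output cannot open code spans, fences, links, or emphasis.
        return re.sub(r"([\\`*_{}\[\]()#+\-.!|<>])", r"\\\1", text)
    if context == "log":
        # Neutralize line breaks so model output cannot forge extra log entries.
        return text.replace("\r", "\\r").replace("\n", "\\n")
    # Fail closed: an unknown destination is an error, never a raw pass-through.
    raise ValueError(f"no encoder registered for context {context!r}")

def store_reply(conn: sqlite3.Connection, user_id: int, reply: str) -> None:
    """For SQL, bind the text as a parameter instead of escaping it by hand."""
    # The driver sends `reply` as data, so quotes or semicolons inside it
    # can never change the statement itself.
    conn.execute("INSERT INTO replies (user_id, body) VALUES (?, ?)", (user_id, reply))
```

The function fails closed on purpose. If someone forgets to register a destination, the call raises an error instead of emitting raw model text.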

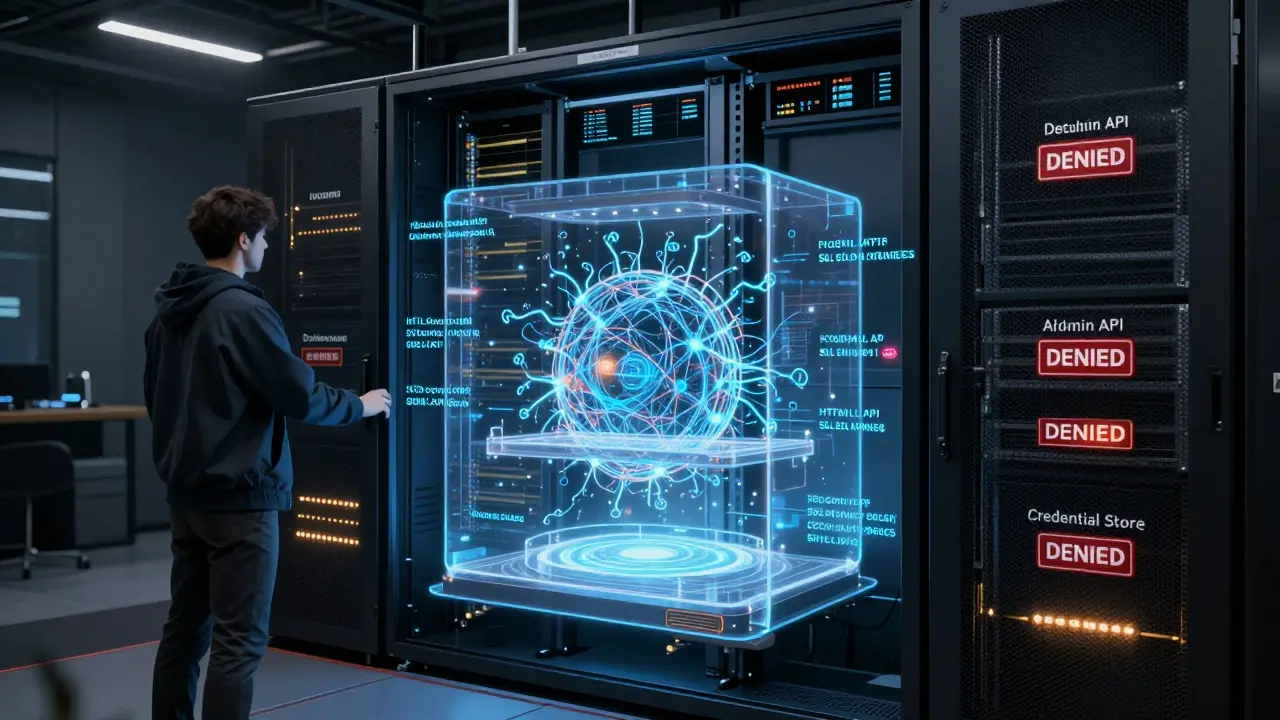

Least Privilege: Give AI Only What It Needs

You wouldn’t give your intern access to your bank account. So why give your AI model access to your entire customer database? The principle of least privilege means granting the bare minimum access needed to do the job. For AI systems, that means:

- Restricting database access to read-only queries on the tables the model actually needs

- Blocking access to environment variables, API keys, or credential stores

- Using role-based access controls so the model can’t call admin APIs

- Encrypting all sensitive data at rest (AES-256) and in transit (TLS 1.2+)
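The first and third bullets can be sketched in Python, assuming the model’s data lives in SQLite. `open_read_only` uses SQLite’s real `mode=ro` URI parameter, so writes fail at the database layer rather than relying on the model to behave. `ToolRegistry`, the role name, and the tool names are hypothetical. Credential isolation and encryption are out of scope for this sketch.

```python
import sqlite3
from pathlib import Path
from typing import Any, Callable

def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open a SQLite database so that any write raises sqlite3.OperationalError."""
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)

class ToolRegistry:
    """Hypothetical gatekeeper: a role may call only the tools explicitly granted to it."""

    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., Any]] = {}
        self._grants: dict[str, set[str]] = {}

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self._tools[name] = fn

    def grant(self, role: str, *tool_names: str) -> None:
        # Grants are additive; a role starts with no tools at all.
        self._grants.setdefault(role, set()).update(tool_names)

    def call(self, role: str, tool_name: str, **kwargs: Any) -> Any:
        # Deny by default: an admin tool is unreachable unless a grant names it.
        if tool_name not in self._grants.get(role, set()):
            raise PermissionError(f"role {role!r} may not call {tool_name!r}")
        return self._tools[tool_name](**kwargs)
```

Denying by default means that a newly registered admin or billing tool stays out of the model’s reach until someone grants it on purpose.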

Why Traditional Security Isn’t Enough

You might be thinking: "We already do input validation. We use firewalls. We scan for vulnerabilities. Why is this different?" Because AI doesn’t work like a web form. It doesn’t just take data and return data. It generates content. It infers meaning. It connects dots you didn’t know were connected. The OWASP Top 10 for web applications doesn’t have a category for "LLM output injection," but the OWASP Top 10 for LLM Applications does: LLM05: Improper Output Handling is a standalone category. It’s not just about SQL injection or XSS. It’s about:

- AI generating code that contains backdoors

- LLMs outputting confidential data because they "thought" it was relevant

- Model responses being interpreted as executable commands by downstream systems
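For the third risk, here is a minimal Python sketch of treating a model-proposed command as data rather than as a shell string. `ALLOWED_COMMANDS` and `run_model_command` are hypothetical; `shlex.split` and `subprocess.run` are standard library. Allowlisting the program name is only a first gate. Arguments can still be dangerous (for example, a file path the model should never read), so a real system would validate those too.

```python
import shlex
import subprocess

# Hypothetical allowlist: the only programs this system will run for the model.
ALLOWED_COMMANDS = {"ls", "wc", "head"}

def run_model_command(model_output: str) -> subprocess.CompletedProcess:
    """Treat a model-proposed command as untrusted data, never as a shell string."""
    # shlex.split only tokenizes; it raises ValueError on unbalanced quotes.
    argv = shlex.split(model_output)
    if not argv or argv[0] not in ALLOWED_COMMANDS:
        raise PermissionError(f"command not allowlisted: {argv[:1]}")
    # Passing a list without shell=True means ;, &&, | and $(...) reach the
    # program as literal arguments instead of being interpreted by a shell.
    return subprocess.run(argv, capture_output=True, text=True, timeout=5, check=False)
```

For example, `ls; rm -rf /` is rejected because its first token is `ls;`. The input `ls /tmp && rm x` would run only `ls`, with `&&`, `rm`, and `x` passed as ordinary file names.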

Real-World Trade-Offs and Fixes

No system is perfect, and every security layer introduces friction. On Reddit, a user named "SecurityDev2023" said implementing output encoding saved their healthcare startup from a HIPAA violation. Another user, "AI_Coder99," complained that over-sanitization blocked valid medical terms. That’s the balance. Too loose, and you’re hacked. Too tight, and you break your app. The fix? Layered defense with room to breathe:

- Start with input sanitization: block known bad patterns

- Add output encoding: encode based on context

- Enforce least privilege: lock down access

- Log everything: track prompts with unusual data

- Build allowlists: for domain-specific terms

- Test with red teams: simulate attacks before they happen
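Several of these layers can be chained in a short Python sketch. The sketch assumes an HTML destination and a single SSN-shaped input rule. `handle_request`, `red_team`, and the `call_model` parameter (a stand-in for whatever LLM client you use) are hypothetical. Least privilege isn’t shown in the code; it comes down to what `call_model` is allowed to reach.

```python
import html
import logging
import re
from typing import Callable

log = logging.getLogger("ai_pipeline")

# Hypothetical input rule: reject prompts carrying SSN-shaped values.
SSN_LIKE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

def handle_request(prompt: str, call_model: Callable[[str], str]) -> str:
    """Pass one prompt through layered checks. call_model stands in for your LLM client."""
    # Layer 1: input sanitization. Log the event, not the sensitive prompt itself.
    if SSN_LIKE.search(prompt):
        log.warning("blocked prompt with SSN-like value (length %d)", len(prompt))
        raise ValueError("prompt rejected: sensitive data")
    reply = call_model(prompt)
    # Layer 2: flag unusual output for human review without blocking it.
    if "<script" in reply.lower():
        log.warning("model reply contained a <script> tag")
    # Layer 3: encode for the destination, assumed here to be an HTML page.
    return html.escape(reply)

def red_team(call_model: Callable[[str], str], attacks: list[str]) -> list[str]:
    """Replay attack prompts; return those whose rendered output still contains raw markup."""
    failures = []
    for attack in attacks:
        try:
            rendered = handle_request(attack, call_model)
        except ValueError:
            continue  # stopped at the input layer, which counts as a pass
        if "<" in rendered:
            failures.append(attack)
    return failures
```

With the encoding layer in place, `red_team` should always return an empty list. Its job is to catch regressions, such as someone later removing or bypassing layer 3.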

Where to Start Today

You don’t need a $500K security team. You need three actions:

- Review every AI output destination. Is it HTML? SQL? JSON? Log files? Apply the right encoding.

- Block all sensitive data from entering prompts. Use pattern matching: credit cards, SSNs, API keys. Test it with real examples.

- Restrict the model’s access. Can it read your customer database? Can it call your billing API? If it doesn’t need that access, turn it off.
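Action two can be sketched in Python. Every pattern here is an assumption to tune against your own data, and `find_sensitive`, `RECORD_ID`, and `ALLOWLIST` are hypothetical names. The Luhn checksum is a standard check for card numbers and cuts false positives on random long digit runs. The `RECORD_ID` rule is deliberately broad. It shows why the custom allowlist from the sanitization section matters: without `ALLOWLIST`, the rule would block "EKG" and "PTSD" along with real identifiers.

```python
import re

# Hypothetical detectors. Tune and test them against your own traffic.
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
API_KEY = re.compile(r"\b(?:sk|pk|api)[-_][A-Za-z0-9]{16,}\b")  # assumed key format
# Deliberately broad "record identifier" rule: 3-5 capitals plus optional digits.
# On its own it would also block clinical terms such as EKG or PTSD.
RECORD_ID = re.compile(r"\b[A-Z]{3,5}-?\d*\b")
ALLOWLIST = {"EKG", "CBC", "PTSD", "MRI"}  # domain terms that must always pass

def luhn_ok(digits: str) -> bool:
    """Luhn checksum; rejects most random digit runs that are not card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0

def find_sensitive(prompt: str) -> list[str]:
    """Return categories of sensitive data found; an empty list means the prompt may pass."""
    hits = []
    if SSN.search(prompt):
        hits.append("ssn")
    for m in CARD_CANDIDATE.finditer(prompt):
        if luhn_ok(re.sub(r"\D", "", m.group())):
            hits.append("card")
            break
    if API_KEY.search(prompt):
        hits.append("api_key")
    if any(tok not in ALLOWLIST for tok in RECORD_ID.findall(prompt)):
        hits.append("record_id")
    return hits
```

Testing with real examples means checking both directions: known-sensitive samples must be caught, and everyday domain terms must pass.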

What’s the biggest mistake companies make with AI security?

They assume that because the model is "smart," it won’t make mistakes. But AI doesn’t understand context the way humans do. It will happily generate a SQL injection, leak a patient’s name, or output a password if the prompt hints at it. The biggest mistake is treating AI output as trustworthy without validation. Always assume the output is dangerous until proven otherwise.

Can I just use existing web security tools for my AI?

Not on their own. Traditional web security tools focus on user inputs and server-side code. AI introduces new attack surfaces: prompt injection, output injection, and data leakage through generated content. OWASP’s LLM Top 10 includes vulnerabilities like LLM05: Improper Output Handling that don’t exist in traditional web apps. You need AI-specific controls, such as context-aware encoding and input sanitization for prompts, that standard WAFs won’t provide.

How do I know if my AI system is leaking data?

Check your logs. Look for outputs that contain patterns matching PII, financial data, or proprietary information. Use tools that scan AI responses for credit card numbers, SSNs, or API keys. If your AI ever returns a response that includes a user’s full name, address, or account number, even accidentally, you’re leaking data. Set up alerts for any output that matches sensitive patterns. Red team testing is the best way to find hidden leaks before attackers do.
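A minimal sketch of output scanning with alerts, in Python. The patterns and the `scan_response` name are hypothetical. Alerts go through the standard `logging` module, where you would attach whatever handler feeds your alerting system. The log message records only the category and hit count, never the matched value, so the alert itself can’t become a new leak.

```python
import logging
import re

alerts = logging.getLogger("ai_output_alerts")

# Hypothetical output patterns; keep them aligned with the ones you block on input.
PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "long_number": re.compile(r"\b\d{9,}\b"),  # account-number-like digit runs
}

def scan_response(response: str) -> tuple[str, list[str]]:
    """Redact sensitive matches in a model response and alert once per category found."""
    found = []
    for label, pattern in PATTERNS.items():
        response, count = pattern.subn(f"[REDACTED:{label}]", response)
        if count:
            found.append(label)
            # Record the category and count, not the sensitive value itself.
            alerts.warning("model output matched %s (%d hit(s))", label, count)
    return response, found
```

Redacting and alerting together lets users still get an answer while a human reviews why the model produced that data in the first place.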

Does least privilege mean the AI can’t do anything useful?

Not at all. Least privilege means the AI only has access to the data and functions it actually needs. For example, a customer service bot doesn’t need access to payroll records, and a code generator doesn’t need to call your cloud storage API. By limiting access, you reduce the damage if the AI is tricked. You’re not limiting capability; you’re protecting your systems. Many AI tools work well with read-only access to a single, sanitized dataset.

Is output encoding the same as filtering?

No. Filtering removes dangerous content before it’s used. Encoding changes how content is represented so it can’t be misinterpreted. For example, filtering might delete a script tag from an AI response, while HTML encoding would turn it into &lt;script&gt; so the browser displays it as text instead of running it as code. Filtering stops bad content; encoding stops bad interpretation. You need both layers.
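To make the distinction concrete, here is a small Python sketch. The function names are hypothetical, and `html.escape` is the standard library’s HTML encoder. The regex filter is deliberately naive and easy to bypass, which is one reason encoding is still needed after filtering. A production system should use a maintained HTML sanitizer instead.

```python
import html
import re

# Illustrative filter only: a regex is not a reliable HTML sanitizer.
SCRIPT_ELEMENT = re.compile(r"<script\b[^>]*>.*?</script\s*>", re.IGNORECASE | re.DOTALL)

def filter_scripts(text: str) -> str:
    """Filtering: delete content judged dangerous (here, whole <script> elements)."""
    return SCRIPT_ELEMENT.sub("", text)

def encode_html(text: str) -> str:
    """Encoding: keep every character, but make markup display as literal text."""
    return html.escape(text)
```

Applied as `encode_html(filter_scripts(reply))`, the two layers work together. The filter drops the script element, and encoding still neutralizes any leftover tags that the filter never looked at.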

Mark Nitka

February 24, 2026 AT 02:48

Sanitization is great, but let’s be real: most dev teams don’t even have logging set up properly, let alone AI-specific filters. I’ve seen teams use GPT-4 to generate SQL queries and just slap on a regex for SSNs. It’s like locking your front door but leaving the back window open with a sign that says ‘Come in!’

Boxplot and Digital Guardian? Yeah, they help, but they’re reactive. What we need is proactive, context-aware blocking at the prompt layer. If a user types ‘show me all patients’, STOP. Don’t even send it to the model. Train the model to reject vague prompts. That’s real security.

Also, why is no one talking about prompt chaining? One sanitized prompt, then five downstream calls with different parameters? That’s where the leaks happen. You sanitize input A, but input B through F? Wild west.

Kelley Nelson

February 24, 2026 AT 21:20

While the article presents a compelling framework, it remains frustratingly superficial in its treatment of the ontological underpinnings of output handling in generative systems. One cannot merely ‘encode’ or ‘sanitize’ without first interrogating the epistemic status of the generated text: is it a representation, a simulation, or an emergent artifact? The very notion of ‘least privilege’ presupposes a Cartesian separation between agent and environment, which is untenable in distributed AI architectures.

Furthermore, the reliance on commercial tools such as Snyk and Azure AI Security Extensions betrays a dangerous technocratic dependency. One wonders whether these solutions are designed to secure systems-or to lock users into proprietary ecosystems. The author’s tone, while pragmatic, is dangerously naive regarding the commodification of security as a service.

Aryan Gupta

February 25, 2026 AT 16:49

Let me tell you something: this whole ‘sanitization’ thing is a scam. The real problem? The models are trained on government and corporate data. They’re not ‘leaking’; they’re being instructed to leak. You think your ‘allowlists’ stop it? HA. The NSA, CIA, and every defense contractor have backdoors baked into the training data. I’ve seen it. I’ve reverse-engineered prompts that return classified fragments when triggered by specific keywords.

And encoding? Please. HTML encoding won’t stop a model from generating a base64-encoded payload that gets decoded by a downstream script. You think Microsoft’s Azure tools are secure? They’re built by the same people who sold Snowden’s data to private contractors.

Least privilege? You think your ‘read-only’ access is safe? I’ve seen AI models exploit memory dumps through context window poisoning. They don’t need database access; they just need to make you believe they’re not accessing anything. And you’re all just… sitting there, letting them.

Fredda Freyer

February 26, 2026 AT 02:13

There’s something deeper here that isn’t being said: AI doesn’t have intent, but humans assign it responsibility. We treat outputs like they’re answers, not probabilities. That’s the root of all these vulnerabilities.

Sanitization works only if you accept that input is a conversation, not a command. Encoding works only if you treat output as a translation, not a fact. Least privilege works only if you stop pretending the AI is a tool and start treating it like a colleague, with boundaries, limits, and oversight.

I’ve worked with teams that blocked ‘EKG’ because it looked like an SSN. That’s not security; that’s fear. The answer isn’t more filters. It’s better training. Teach the AI what ‘medical context’ means. Let it learn the difference between a patient identifier and a clinical term. Build a taxonomy, not a blacklist.

And for heaven’s sake, stop thinking of this as a technical problem. It’s a cultural one. We built AI to be helpful. Now we’re terrified of it being helpful. We need to rebuild trust, not just firewalls.

Gareth Hobbs

February 26, 2026 AT 17:15

Oh this is rich. Sanitization? Encoding? Least privilege? You’re all acting like this is some new frontier, when in fact, it’s just the same old web dev mistakes with fancy buzzwords. We’ve been dealing with XSS since 2002! SQLi since 1999! And now you’re surprised because AI can type ‘alert(1)’? Come on.

And you Brits and Yanks acting like you’re the only ones who’ve heard of this? In the UK, we’ve had NHS staff using AI to auto-generate patient notes since 2021. We built our own encoding layer before you even knew what LLM stood for. You’re all just playing catch-up.

Also, ‘least privilege’? Give me a break. If your AI can’t access your billing API, how’s it supposed to auto-generate invoices? You’re not securing systems; you’re crippling them. And don’t even get me started on ‘allowlists’ for medical terms. You think ‘PTSD’ is safe? What if it’s a code for a classified op? You’re all clueless.

Zelda Breach

February 27, 2026 AT 13:23

Let’s cut the crap. This whole post is corporate fluff dressed up as security wisdom. You don’t need ‘context-aware encoding’; you need to stop letting AI touch any sensitive data at all. Full stop. The moment you let an LLM near patient records, you’ve already lost. No amount of sanitization fixes human stupidity.

And don’t even get me started on ‘least privilege’. You think locking down API keys stops anything? The models are trained on scraped data that includes every credential ever leaked on GitHub. They don’t need access; they just need to guess. And they’re good at guessing.

Stop pretending this is fixable with tools. It’s not. It’s a fundamental design flaw in how we treat AI as a ‘tool’ instead of a risk vector. And you? You’re the reason we’re all getting hacked.

Alan Crierie

February 27, 2026 AT 20:31

Hey everyone, just wanted to say I really appreciate how thoughtful this post is. It’s easy to get lost in the tech jargon, but the core idea, that we need to treat AI output like untrusted data, is so simple and so powerful.

I’ve been using this approach in my team’s chatbot for mental health support, and honestly? It changed everything. We started by encoding every response as plain text, even if it was going into HTML. No raw tags. No evals. No direct embedding. Just sanitized, escaped, and wrapped.

And we added a tiny rule: if the model ever generates a number longer than 8 digits, we log it and notify a human. It’s not perfect, but it’s kept us safe for 18 months.

Also, I love how you mentioned allowlists for medical terms. We did the same-built a custom dictionary of 200+ valid clinical terms. Now our AI doesn’t freak out when someone says ‘MRI’ or ‘CBC’. It just knows they’re safe.

Small steps. Big impact. Thank you for the clarity 💙

Nicholas Zeitler

February 28, 2026 AT 11:04

Let me just say: this is the most important thing I’ve read all year. Seriously. I’ve been working in healthcare IT for 12 years, and I’ve seen every kind of breach imaginable. But AI output leaks? That’s the new frontier.

Sanitization? Check. Encoding? Double-check. Least privilege? Triple-check.

But here’s the thing I wish more people understood: you can’t automate trust. You can automate checks. You can automate alerts. But you can’t automate judgment.

That’s why we added weekly review sessions. Every Friday, two devs and one compliance officer sit down and look at 10 random AI outputs. Not the ones that triggered alerts; the ones that didn’t. That’s where the real leaks hide.

And yes, we blocked ‘EKG’. For a week. Then we added it to the allowlist. Simple. Clean. No drama.

Do this. Not next quarter. Not next year. Today. Your users will thank you.