Leap Nonprofit AI Hub: Practical AI Tools for Nonprofits

At the heart of this hub is AI for nonprofits: artificial intelligence tools built specifically to help mission-driven organizations scale impact without compromising ethics or compliance. Often described as responsible AI, it’s not about flashy tech. It’s about making tools that work for teams with limited tech staff and tight budgets. Many of the posts here focus on vibe coding, a way for non-developers to build apps using plain-language prompts instead of code, letting clinicians, fundraisers, and program managers create custom tools without touching sensitive data. Related to this is LLM ethics, the practice of deploying large language models in ways that avoid bias, protect privacy, and ensure accountability, especially in healthcare and finance. And because data doesn’t stop at borders, AI compliance (following laws like GDPR and the California AI Transparency Act) is no longer optional. It’s part of daily operations.

You’ll find guides that cut through the hype: how to reduce AI costs, what security rules non-tech users must follow, and why smaller models often beat bigger ones. No theory without action. No jargon without explanation. Just clear steps for teams that need to do more with less.

What follows are real examples, templates, and hard-won lessons from nonprofits using AI today. No fluff. Just what works.

Open-Weight vs Proprietary AI: Architectural Implications for 2026

Explore the architectural trade-offs between open-weight and proprietary AI models in 2026. Learn how transparency, infrastructure costs, and security impact your system design.

Vibe Coding Explained: How AI Is Democratizing Software Development in 2026

Discover how vibe coding is democratizing software development in 2026. Learn who can build apps now, compare AI coding with no-code, and avoid common pitfalls.

When Large Language Models Should Abstain: Designing Safe Non-Answers

Explore how Large Language Models can be designed to safely abstain from answering when uncertain. Learn about Abstention Ability, technical mechanisms like verifiers and thresholds, and why saying 'I don't know' improves AI reliability.

Outcome-Driven Development: Managing Requirements in Vibe Coding Projects

Learn how to manage requirements in vibe coding projects using Outcome-Driven Development. Discover strategies for structure, security, and vertical slicing.

Hybrid Recurrent-Transformer Models: Do They Actually Improve LLMs?

Explore whether hybrid recurrent-transformer designs improve LLMs. We analyze Mamba-Transformer mixes, sequential vs parallel structures, and real-world examples like Hunyuan-TurboS.

HR Automation with Generative AI: Job Descriptions, Interview Guides, and Onboarding

Explore how generative AI transforms HR automation for job descriptions, interview guides, and onboarding. Learn about costs, risks, and top tools like Gloat and HireVue.

Training Data Disclosures for Generative AI: New Rules and Strategies for 2026

California's AB 2013 mandates training data disclosures for generative AI. Learn the 12 required data points, strategies to protect trade secrets, and how to comply by 2026.

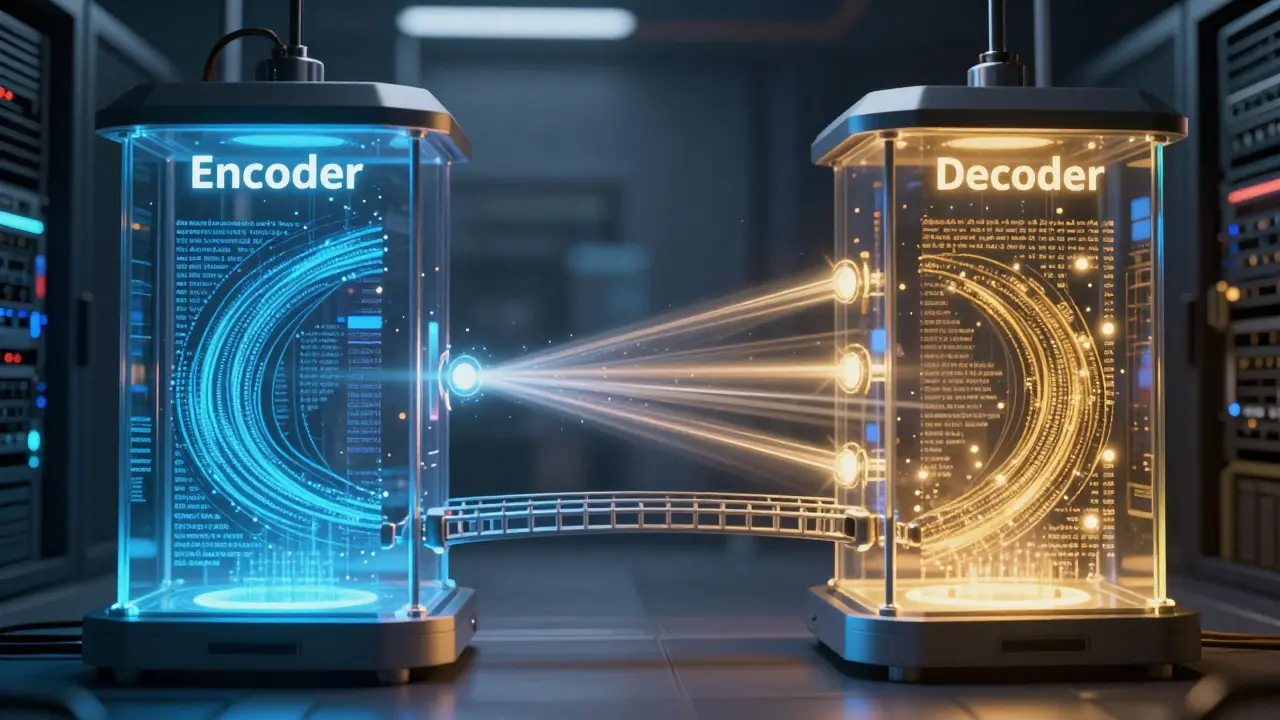

Cross-Attention in Encoder-Decoder Transformers: How LLMs Handle Conditioning

Explore how cross-attention mechanisms enable encoder-decoder transformers to condition outputs on input contexts. Learn the mechanics, benefits for machine translation, and applications in multimodal AI.

Tool Use with Large Language Models: Function Calling and External APIs Guide

Learn how function calling enables Large Language Models to use external APIs and tools. Compare GPT, Claude, and Gemini implementations, explore security risks, and get practical tips for building reliable AI agents.

Template Repos with Pre-Approved Dependencies for Vibe Coding: A Governance Guide

Explore how template repos with pre-approved dependencies govern vibe coding workflows, ensuring security, consistency, and compliance in AI-assisted development.

Data Extraction Prompts in Generative AI: Structuring Outputs into JSON and Tables

Learn how to structure generative AI prompts for reliable data extraction into JSON and tables. Covers schema design, error handling, and platform comparisons for enterprise workflows.

Continuous Documentation: How to Keep READMEs and Diagrams in Sync with Code

Learn how to stop documentation drift by implementing continuous documentation. Discover tools like ReadMe.io, DeepDocs, and Terrastruct to keep READMEs and diagrams perfectly synced with your code.
