Tag: prompt engineering

Data Extraction Prompts in Generative AI: Structuring Outputs into JSON and Tables

Learn how to structure generative AI prompts for reliable data extraction into JSON and tables. Covers schema design, error handling, and platform comparisons for enterprise workflows.

Privacy by Design Prompts: How to Instruct AI to Limit Data Collection

Learn how to use Privacy by Design prompts to instruct AI models to limit data collection. Explore practical steps, core principles, and real-world examples to protect your privacy in the age of generative AI.

Customizing LLMs: Fine-Tuning, Adapters, and Prompts Explained

Explore the three main paths for LLM customization: prompting, adapters like LoRA, and fine-tuning. Learn which method fits your budget, compute constraints, and performance goals.

Localization Prompts for Generative AI: Adapting Content Across Regions and Languages

Learn how to craft localization prompts for generative AI to adapt content across regions and languages. Reduce errors, improve cultural relevance, and streamline global campaigns.

Task-Specific Prompt Blueprints for Search, Summarization, and Q&A

Learn how to move beyond basic prompting with task-specific blueprints for search, summarization, and Q&A. Boost LLM consistency and accuracy today.

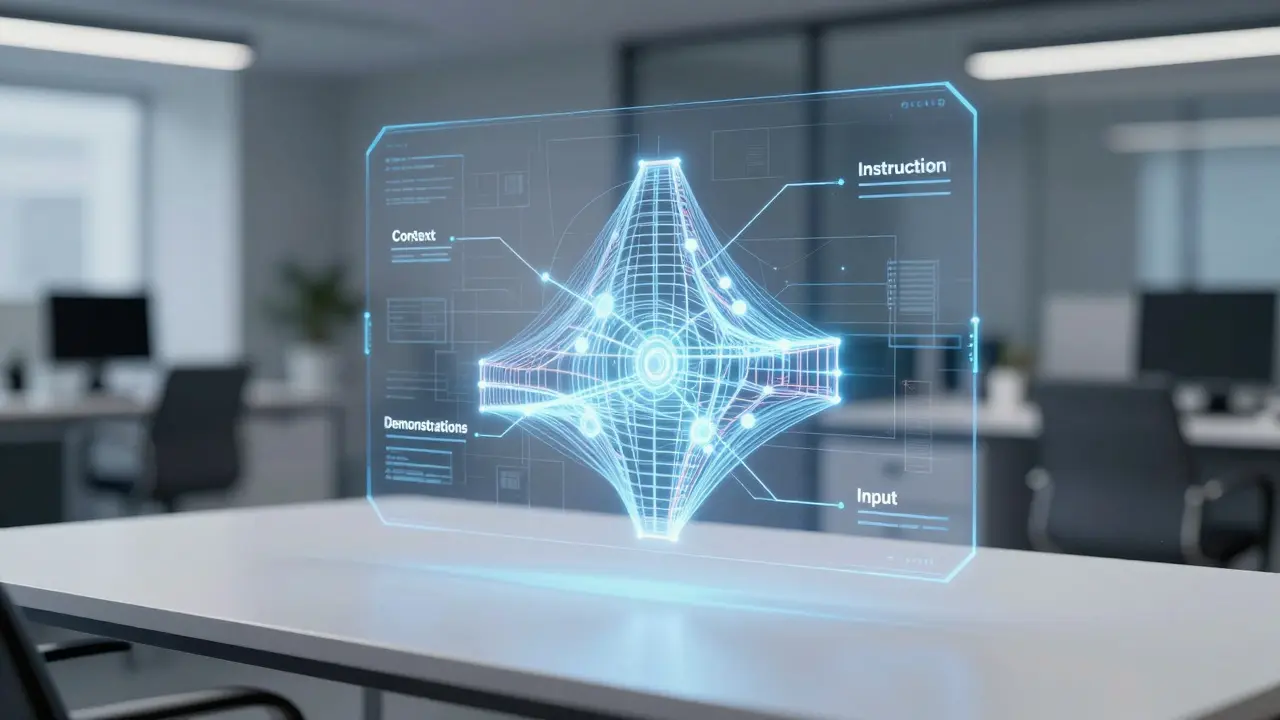

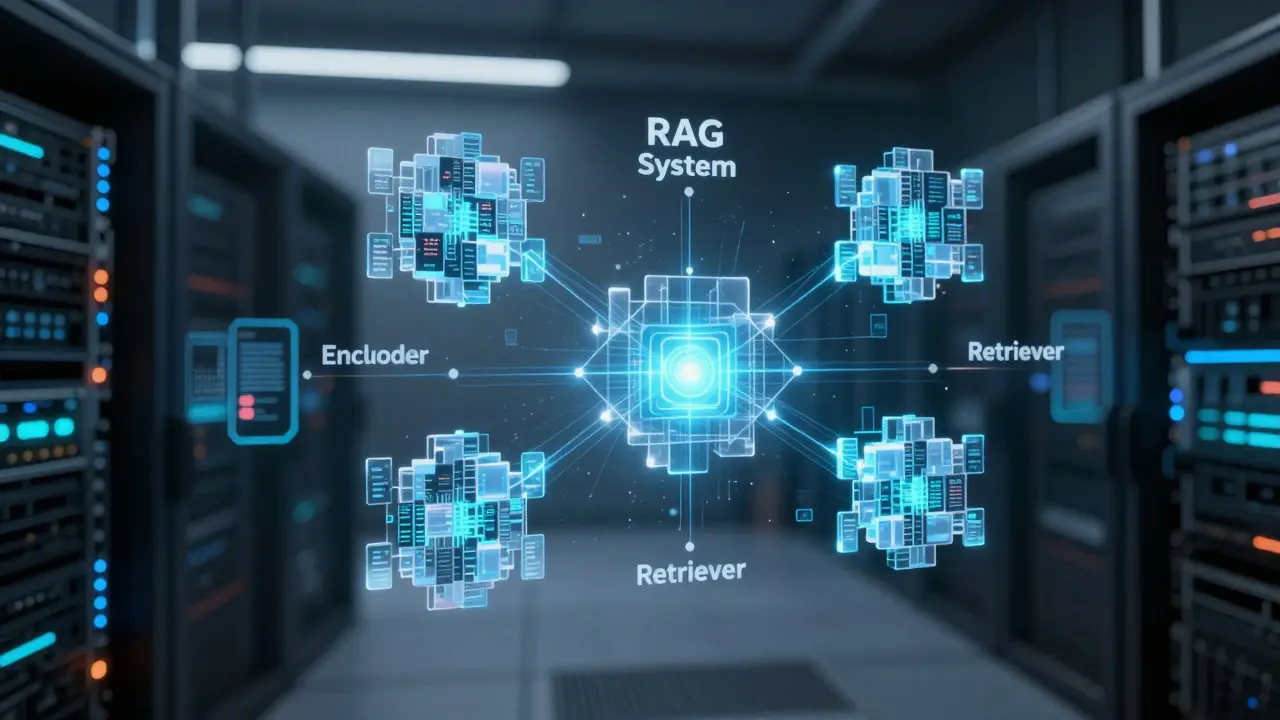

Scaling AI: Playbooks for RAG, Agents, and Prompt Engineering

Learn how to scale AI systems using professional playbooks for RAG, agentic AI, and prompt engineering. Move from prototypes to reliable production systems.

Deterministic Prompts: How to Reduce Variance in LLM Responses

Learn how to reduce variance in LLM responses using deterministic prompts, parameter tuning, and structural anchors to make your AI outputs predictable.

NLP Pipelines vs End-to-End LLMs: When to Use Modular Systems vs Prompt-Based Models

NLP pipelines and end-to-end LLMs aren't rivals; they're partners. Learn when to use each, how they compare in cost and accuracy, and why the smartest systems combine both for speed, precision, and scalability.

Self-Ask and Decomposition Prompts for Complex LLM Questions

Self-Ask and decomposition prompting improve LLM accuracy on complex questions by breaking them into visible, verifiable steps. Used in legal, medical, and financial AI, they boost accuracy by up to 14% over standard methods, but they require careful implementation.

Ethical Guidelines for Democratized Vibe Coding at Scale

Vibe coding lets anyone build apps with natural language, but without ethical rules it risks security problems, legal trouble, and eroded skills. Here are five proven guidelines to scale it responsibly.

Chain-of-Thought in Vibe Coding: Why Explanations Before Code Make You a Better Developer

Chain-of-thought prompting forces AI coding assistants to explain their logic before generating code, reducing errors and building real understanding. Learn how this simple technique transforms how developers work with AI.

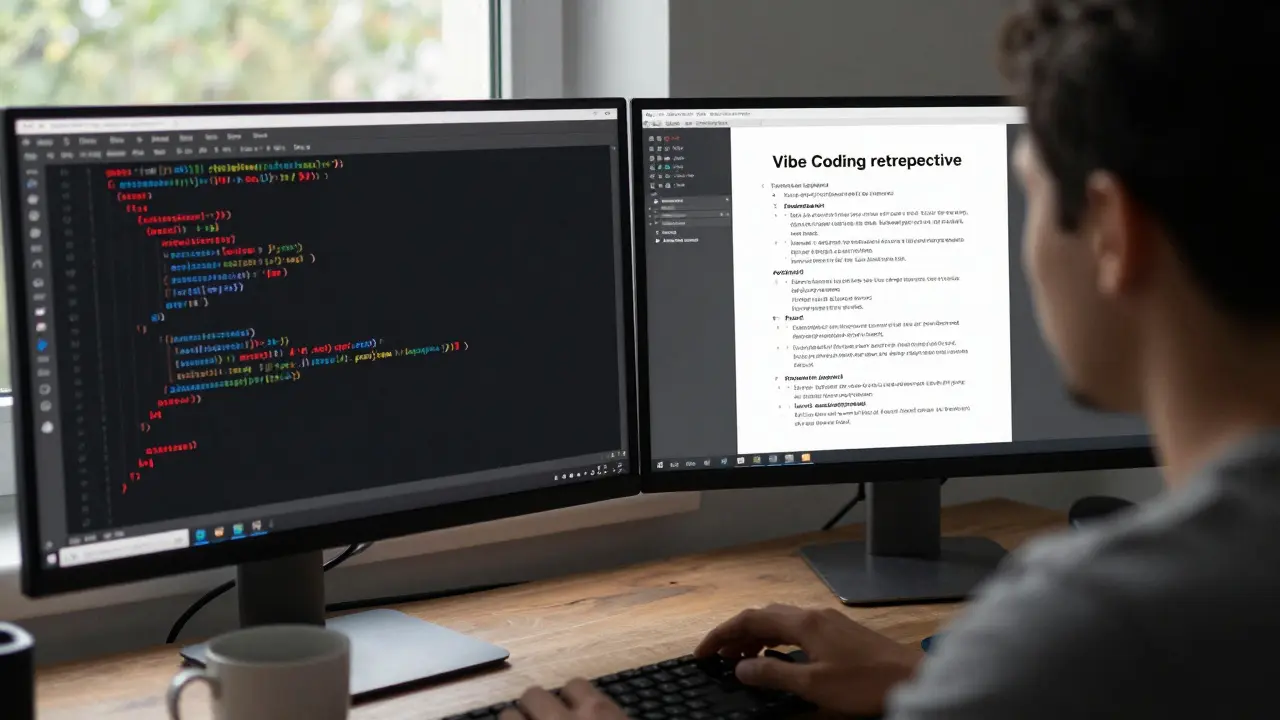

Retrospectives for Vibe Coding: How to Learn from AI Output Failures

Learn how to run effective retrospectives for vibe coding to turn AI code failures into lasting improvements. Discover the 7-part template, real team examples, and why this is the new standard in AI-assisted development.
