Why Multimodality Expands Generative AI Capabilities Beyond Text-Only Systems

Mar, 25 2026

You might think text is enough for artificial intelligence. After all, we read books and write emails every day. But humans don't experience the world through words alone. We see a red light, hear a siren, and feel the heat of a stove. When AI relies only on text, it misses half the story. Multimodal AI addresses this: it is a system that integrates and interprets multiple data types, including text, images, audio, and video, within a single framework. As we move through 2026, the gap between what text-only models can do and what multimodal systems achieve is widening fast.

Text-only systems are like trying to describe a painting using only words. You get the idea, but you miss the color, the texture, and the emotion. Multimodal AI changes this by combining inputs. It doesn't just read a caption; it sees the image. It doesn't just hear a command; it understands the tone of voice. This shift isn't just a feature update. It is a fundamental change in how machines understand context.

Understanding the Core Difference Between Modalities

To see why this matters, look at how information is processed. In a traditional text model, the input is strictly characters and tokens. If you upload a photo of a broken engine part, a text-only system sees nothing but a file name. A multimodal system processes the pixels, recognizes the crack, and cross-references it with technical manuals.
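
To make "processes the pixels" concrete, here is a minimal sketch of one common approach, the Vision Transformer style patch split: the image is cut into fixed-size tiles and each tile is flattened into a vector that the model treats like a token. This illustrates the general technique, not the internals of any specific product; the helper name `image_to_patch_tokens` and the 4×4 toy image are hypothetical.

```python
from typing import List

Image = List[List[List[int]]]  # height x width x channels (e.g. RGB)


def image_to_patch_tokens(image: Image, patch: int) -> List[List[int]]:
    """Split an H x W x C image into non-overlapping patch x patch tiles
    and flatten each tile into one vector ("token"), ViT-style.

    Hypothetical helper for illustration; real models follow this step
    with a learned linear projection and position embeddings.
    """
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image dimensions must be divisible by patch size")
    tokens = []
    for top in range(0, height, patch):          # walk rows of patches
        for left in range(0, width, patch):      # walk columns of patches
            flat = []
            for y in range(top, top + patch):
                for x in range(left, left + patch):
                    flat.extend(image[y][x])     # append this pixel's channels
            tokens.append(flat)
    return tokens


# A toy 4x4 RGB image: every pixel value is (row, col, 0).
toy = [[[r, c, 0] for c in range(4)] for r in range(4)]
tokens = image_to_patch_tokens(toy, patch=2)
print(len(tokens), len(tokens[0]))  # 4 12  (4 patches, each 2*2*3 values)
```

At a typical 224×224 resolution with 16×16 patches, the same procedure yields 196 tokens, far more signal than the file name a text-only system would see.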

This joint analysis produces more accurate outcomes. Research from 2024 showed that combining data sources, rather than analyzing them separately, creates a more cohesive understanding. Google Gemini, a multimodal model Google introduced in December 2023, is one example: it uses a unified architecture designed to accept text, images, audio, and video. This means it can take a video of a machine failing, listen to the sound of the grinding gears, and read the maintenance log together. A text-only model would require you to describe the sound and the video in words first, which introduces human error.

The technical architecture behind this is complex. Modern systems use unified architectures that combine different types of information into a single representation. This lets the model reason over mixed inputs and, in some systems, respond in more than one format. Benchmarks indicate 34% higher accuracy on vision-language tasks compared with single-modality approaches. That is a large jump in reliability for any business relying on these tools.
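
"Single representation" usually means something like the following: each modality is encoded separately, then a learned projection maps every encoder's output to the same vector size so the model can attend over one combined sequence. The sketch below assumes that early-fusion design and uses tiny hand-written projection matrices; real systems learn these weights, and the helper names `project` and `fuse` are hypothetical.

```python
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]  # shape: d_model rows x d_in columns


def project(matrix: Matrix, vec: Vector) -> Vector:
    """Map one d_in feature vector into the shared d_model space."""
    if any(len(row) != len(vec) for row in matrix):
        raise ValueError("projection width does not match feature size")
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


def fuse(features: Dict[str, List[Vector]],
         projections: Dict[str, Matrix]) -> List[Vector]:
    """Project every modality into d_model and concatenate the results
    into one token sequence (early fusion)."""
    sequence: List[Vector] = []
    for modality, vectors in features.items():
        sequence.extend(project(projections[modality], v) for v in vectors)
    return sequence


# Toy encoder outputs with a different size per modality (d_model = 2).
features = {
    "text": [[1.0, 0.0, 2.0]],                               # 3-dim
    "image": [[0.5, 0.5, 0.5, 0.5], [1.0, 0.0, 0.0, 1.0]],  # 4-dim
    "audio": [[3.0]],                                        # 1-dim
}
projections = {
    "text": [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]],
    "image": [[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]],
    "audio": [[1.0], [-1.0]],
}
print(fuse(features, projections))
# [[1.0, 2.0], [1.0, 1.0], [1.0, 1.0], [3.0, -3.0]]
```

Because every modality ends up as same-sized vectors in one sequence, a single model can relate a gear-grinding sound to a video frame directly instead of relying on a text description of either.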

Real-World Impact in Critical Industries

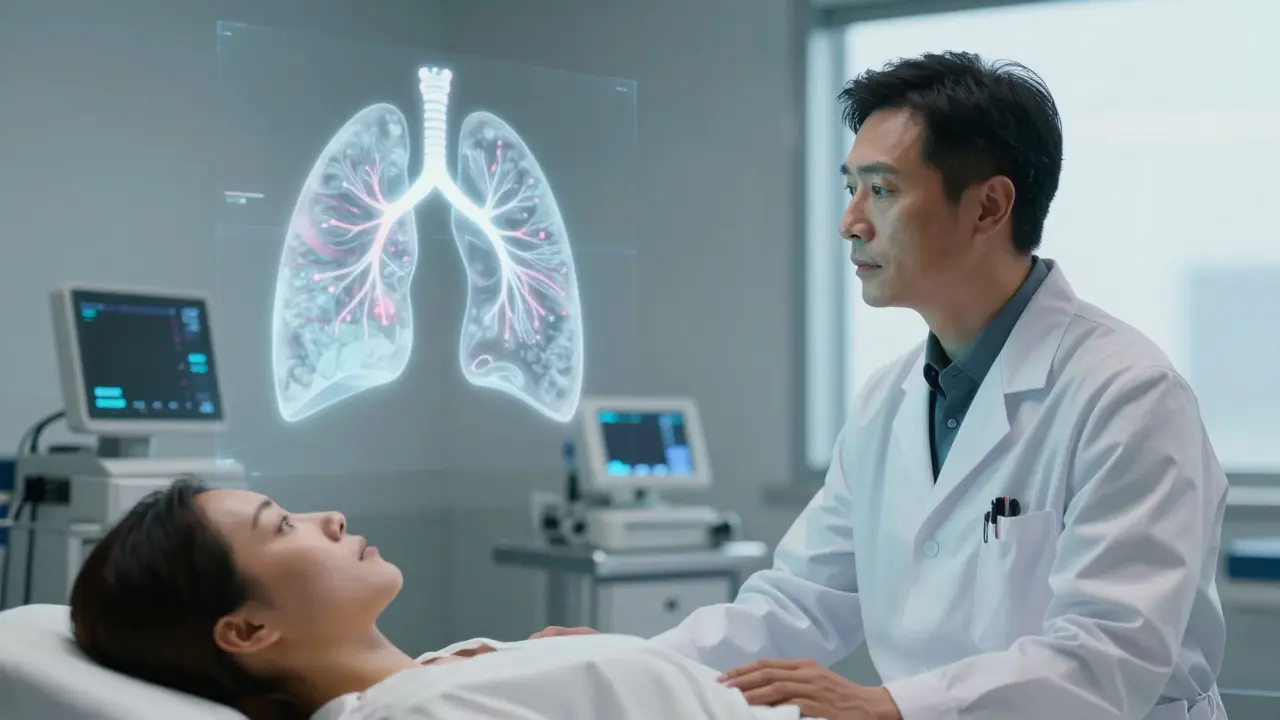

Let's look at where this technology changes the game. Healthcare is the most obvious example. A doctor doesn't diagnose a patient based on a text file alone. They look at X-rays, listen to the heartbeat, and ask questions. Multimodal systems mimic this workflow.

A study by Stanford University in 2024 found that multimodal systems reduced diagnostic errors by 37.2% when combining radiology images with patient history. Imagine a scenario where a patient records a cough on their phone. A text-only system analyzes the transcript of their symptoms. A multimodal system analyzes the audio of the cough, the video of their breathing, and their medical history. The precision in medical diagnostics is 28.7% higher when these inputs are analyzed together rather than separately.

Customer service is another area seeing rapid transformation. Text-only chatbots often fail when a customer is frustrated. They miss the tone of voice. Multimodal AI analyzes the customer's tone during calls alongside text inputs. Founderz documented a 41% improvement in resolution rates when this emotional context is included. A bank case study showed multimodal chatbots resolved 68% of complex inquiries requiring document image analysis versus 42% for text-only systems. This means faster answers and happier customers.
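
Improvement figures like these are easy to misread, because a gain can be stated in percentage points or as a relative lift. Using the bank case study's 42% and 68% resolution rates, this small sketch shows both readings; it is plain arithmetic, and the function names are hypothetical.

```python
def percentage_point_gap(baseline: float, improved: float) -> float:
    """Absolute difference between two rates, in percentage points."""
    return improved - baseline


def relative_lift(baseline: float, improved: float) -> float:
    """Relative improvement over the baseline, as a percentage."""
    if baseline == 0:
        raise ValueError("baseline rate must be non-zero")
    return (improved - baseline) / baseline * 100


# Resolution rates from the bank case study (percent of complex inquiries).
text_only, multimodal = 42.0, 68.0
print(f"{percentage_point_gap(text_only, multimodal):.0f} percentage points")
print(f"{relative_lift(text_only, multimodal):.1f}% relative lift")
# 26 percentage points
# 61.9% relative lift
```

The same result reads as 26 points or roughly 62%, so it is worth checking which framing a headline number uses before comparing it with another figure, such as the 41% improvement above.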

| Metric | Text-Only Systems | Multimodal Systems |
|---|---|---|
| Diagnostic Precision | Baseline | 28.7% Higher |
| Customer Resolution Rate (Complex Inquiries) | 42% | 68% |
| Vision-Language Task Accuracy | Baseline | 34% Higher |
| Processing Time | 3.4 Seconds (Sequential) | 1.2 Seconds (Parallel) |
| Hardware Requirements | Standard GPU | High VRAM (80GB+) |

Technical Requirements and Hardware Constraints

This power comes with a price. You cannot run a high-end multimodal model on a standard laptop. Processing heterogeneous data streams requires specialized hardware. NVIDIA's research in 2024 indicated that effective multimodal training requires at least 80GB of VRAM for models handling high-resolution images alongside text. This is a significant barrier for smaller teams.
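
A back-of-the-envelope estimate shows why memory climbs so quickly. The sketch below uses a common rule of thumb (an assumption, not NVIDIA's method): about 2 bytes per parameter to hold fp16 weights for inference, and about 16 bytes per parameter for mixed-precision training with the Adam optimizer. It deliberately leaves out activation memory, which grows with image resolution and batch size and can add substantially more. The 7-billion-parameter model is hypothetical.

```python
GIB = 1024 ** 3

# Rule-of-thumb bytes per parameter (assumption: mixed-precision training
# with Adam; activation memory is NOT included in either figure).
BYTES_PER_PARAM = {
    "inference_fp16": 2,        # fp16/bf16 weights only
    "training_adam_mixed": 16,  # fp16 weights + fp16 grads
                                # + fp32 master weights, momentum, variance
}


def estimate_memory_gib(num_params: float, mode: str) -> float:
    """Lower bound on GPU memory for model state alone, in GiB."""
    return num_params * BYTES_PER_PARAM[mode] / GIB


for mode in BYTES_PER_PARAM:
    print(mode, round(estimate_memory_gib(7e9, mode), 1))
# inference_fp16 13.0
# training_adam_mixed 104.3
```

Under these assumptions the hypothetical model fits on a modest GPU for inference but needs more than an 80GB accelerator's worth of memory for full training before a single image activation is counted.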

Energy consumption is another factor. Current multimodal systems consume 2.8x more energy per inference than text-only systems. As companies scale these models, the environmental cost becomes a strategic consideration. However, the speed and accuracy gains often outweigh these costs. For instance, GPT-5 processes multimodal queries 2.8x faster than sequential single-modality approaches. You trade energy for speed and accuracy.
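
One plausible reason for speedups like this is that separate encoders for each modality can run at the same time instead of one after another. The sketch below simulates that with `asyncio`: each hypothetical encoder simply sleeps, and the per-modality latencies are invented (scaled down 10x) so that they add up to the table's 3.4 seconds when run sequentially and peak at 1.2 seconds when run in parallel. It illustrates the scheduling effect only and says nothing about how GPT-5 is actually built.

```python
import asyncio
import time

# Hypothetical per-modality latencies in seconds (assumed split, scaled
# down 10x from the table's 3.4 s sequential / 1.2 s parallel figures).
LATENCY = {"text": 0.10, "image": 0.12, "audio": 0.12}


async def encode(modality: str) -> str:
    """Stand-in for a model call; sleeps instead of doing real work."""
    await asyncio.sleep(LATENCY[modality])
    return f"{modality}-features"


async def run_sequential() -> list:
    # Each encoder waits for the previous one: total ~= sum of latencies.
    return [await encode(m) for m in LATENCY]


async def run_parallel() -> list:
    # All encoders start together: total ~= the slowest single latency.
    return list(await asyncio.gather(*(encode(m) for m in LATENCY)))


def timed(coro_fn):
    """Run an async function to completion and measure wall-clock time."""
    start = time.perf_counter()
    result = asyncio.run(coro_fn())
    return result, time.perf_counter() - start


seq_result, seq_time = timed(run_sequential)
par_result, par_time = timed(run_parallel)
print(f"sequential {seq_time:.2f}s, parallel {par_time:.2f}s, "
      f"speedup {seq_time / par_time:.1f}x")
```

Sequential time is roughly the sum of the latencies (0.34 s here) and parallel time roughly the slowest one (0.12 s), a ratio near 2.8x. In practice the gain can be smaller when encoders compete for the same GPU.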

Latency is also a concern in low-bandwidth environments, where the IEEE documented 18-22% higher latency. If you are deploying in a remote clinic or on a factory floor with a poor connection, plan for offline capabilities or edge computing. Integration options are expanding, though. Google's Vertex AI Gemini API offers enterprise security features and supports data residency requirements in 47 countries, which helps meet compliance needs when handling sensitive data.
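
Whether to run in the cloud or at the edge can be reasoned about with simple arithmetic: over a slow link, uploading an image can take longer than running a smaller model locally. The sketch below compares the two paths under a hypothetical policy; the bandwidth, round-trip, and compute times are made-up example values, not measurements, and a real deployment would also weigh accuracy differences between the cloud and edge models.

```python
from dataclasses import dataclass


@dataclass
class Link:
    bandwidth_mbps: float  # measured uplink bandwidth, megabits per second
    round_trip_s: float    # measured network round-trip time, seconds


def upload_seconds(payload_bytes: int, link: Link) -> float:
    """Transfer time plus one round trip, ignoring protocol overhead."""
    return payload_bytes * 8 / (link.bandwidth_mbps * 1_000_000) + link.round_trip_s


def choose_backend(payload_bytes: int, link: Link,
                   cloud_compute_s: float, edge_compute_s: float) -> str:
    """Hypothetical policy: pick whichever path should answer first.

    cloud cost = upload + remote inference; edge cost = local inference.
    """
    cloud_total = upload_seconds(payload_bytes, link) + cloud_compute_s
    return "cloud" if cloud_total < edge_compute_s else "edge"


image = 2_000_000  # a 2 MB photo
clinic = Link(bandwidth_mbps=1.0, round_trip_s=0.6)
office = Link(bandwidth_mbps=100.0, round_trip_s=0.03)
print(choose_backend(image, clinic, cloud_compute_s=1.2, edge_compute_s=4.0))  # edge
print(choose_backend(image, office, cloud_compute_s=1.2, edge_compute_s=4.0))  # cloud
```

On the 1 Mbps clinic link the upload alone takes about 16 seconds, so the slower local model still wins by a wide margin.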

Challenges in Alignment and Bias

It is not all smooth sailing. Cross-modal alignment remains a tricky problem. Tredence's technical analysis identified a 12-15% accuracy drop when processing non-standard image formats compared to standard RGB inputs. If your data pipeline is messy, the model's performance suffers. You need clean, synchronized data.
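
One practical response to the format gap is to normalize every image to plain RGB at ingestion, before it reaches the model. The pure-Python sketch below handles a few common layouts for illustration; the supported modes and the white background used for transparency are assumptions, and a production pipeline would typically rely on an imaging library such as Pillow rather than hand-written loops.

```python
from typing import List, Sequence, Tuple

RGB = Tuple[int, int, int]


def to_rgb(pixels: Sequence[Sequence[int]], mode: str,
           background: RGB = (255, 255, 255)) -> List[RGB]:
    """Convert a flat list of pixels to RGB tuples.

    Supported modes (an illustrative subset, not an exhaustive list):
      "L"    - one grayscale channel, copied into R, G and B
      "RGBA" - alpha-composited over `background`
      "BGR"  - channel order reversed (OpenCV's default layout)
      "RGB"  - passed through unchanged
    """
    out: List[RGB] = []
    for px in pixels:
        if mode == "L":
            (v,) = px
            out.append((v, v, v))
        elif mode == "RGBA":
            r, g, b, a = px
            alpha = a / 255
            # Blend each channel with the background by its opacity.
            out.append(tuple(round(c * alpha + bg * (1 - alpha))
                             for c, bg in zip((r, g, b), background)))
        elif mode == "BGR":
            b, g, r = px
            out.append((r, g, b))
        elif mode == "RGB":
            r, g, b = px
            out.append((r, g, b))
        else:
            raise ValueError(f"unsupported mode: {mode}")
    return out


mixed = to_rgb([(200,)], "L") + to_rgb([(10, 20, 30)], "BGR")
print(mixed)  # [(200, 200, 200), (30, 20, 10)]
```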

Bias is another critical issue. The Partnership on AI's ethics assessment identified a 15.8% higher bias amplification rate in multimodal systems compared to text-only models when processing cultural context. This happens because images carry cultural symbols that text might not. For example, the same hand gesture can be friendly in one region and offensive in another. If the training data isn't diverse across modalities, the model will make mistakes.
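
A practical first check for this kind of bias is to measure accuracy separately for each cultural or regional group rather than reporting one overall number. The sketch below is a minimal disaggregated evaluation on made-up data; the group names and counts are hypothetical, and a simple accuracy gap is a cruder measure than the bias amplification rate cited above.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Each record: (group label, was the model's answer correct?)
Record = Tuple[str, bool]


def accuracy_by_group(records: Iterable[Record]) -> Dict[str, float]:
    """Accuracy computed separately for each group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, ok in records:
        total[group] += 1
        correct[group] += ok  # True counts as 1, False as 0
    return {g: correct[g] / total[g] for g in total}


def max_gap(per_group: Dict[str, float]) -> float:
    """Largest accuracy difference between any two groups."""
    return max(per_group.values()) - min(per_group.values())


# Hypothetical audit of gesture-interpretation answers from two regions.
results = ([("region_a", True)] * 9 + [("region_a", False)] * 1
           + [("region_b", True)] * 6 + [("region_b", False)] * 4)
scores = accuracy_by_group(results)
print(scores)                     # {'region_a': 0.9, 'region_b': 0.6}
print(round(max_gap(scores), 2))  # 0.3
```

An overall accuracy of 75% would hide the fact that one region is served far worse than the other.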

Professor Gary Marcus warned in April 2025 that current systems still struggle with causal reasoning across modalities. He cited specific failures where GPT-4o misinterpreted satirical images as factual content in 23% of test cases. This means you cannot blindly trust the output. Human oversight is still required, especially in high-stakes environments.
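
Human oversight is easier to enforce when it is built into the pipeline. One simple, hypothetical pattern is a triage step that sends low-confidence outputs, and anything in a high-stakes category, to a human reviewer. The threshold, the `factual_claim` label, and the `Prediction` type below are assumptions for illustration; model confidence scores are often poorly calibrated, so the threshold itself needs validation.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model-reported score in [0, 1]; may be miscalibrated


def triage(preds: List[Prediction], threshold: float = 0.9,
           high_stakes_labels: frozenset = frozenset({"factual_claim"})
           ) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into auto-accepted and human-review queues.

    Hypothetical policy: anything below the threshold, or any label deemed
    high-stakes, goes to a person regardless of confidence.
    """
    auto, review = [], []
    for p in preds:
        if p.confidence < threshold or p.label in high_stakes_labels:
            review.append(p)
        else:
            auto.append(p)
    return auto, review


preds = [
    Prediction("img1", "satire", 0.97),
    Prediction("img2", "factual_claim", 0.99),
    Prediction("img3", "satire", 0.55),
]
auto, review = triage(preds)
print([p.item_id for p in auto], [p.item_id for p in review])
# ['img1'] ['img2', 'img3']
```

Routing every "factual" reading to a person, however confident the model is, targets exactly the failure Marcus describes: satire confidently mistaken for fact.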

The Future of Embodied Multimodal AI

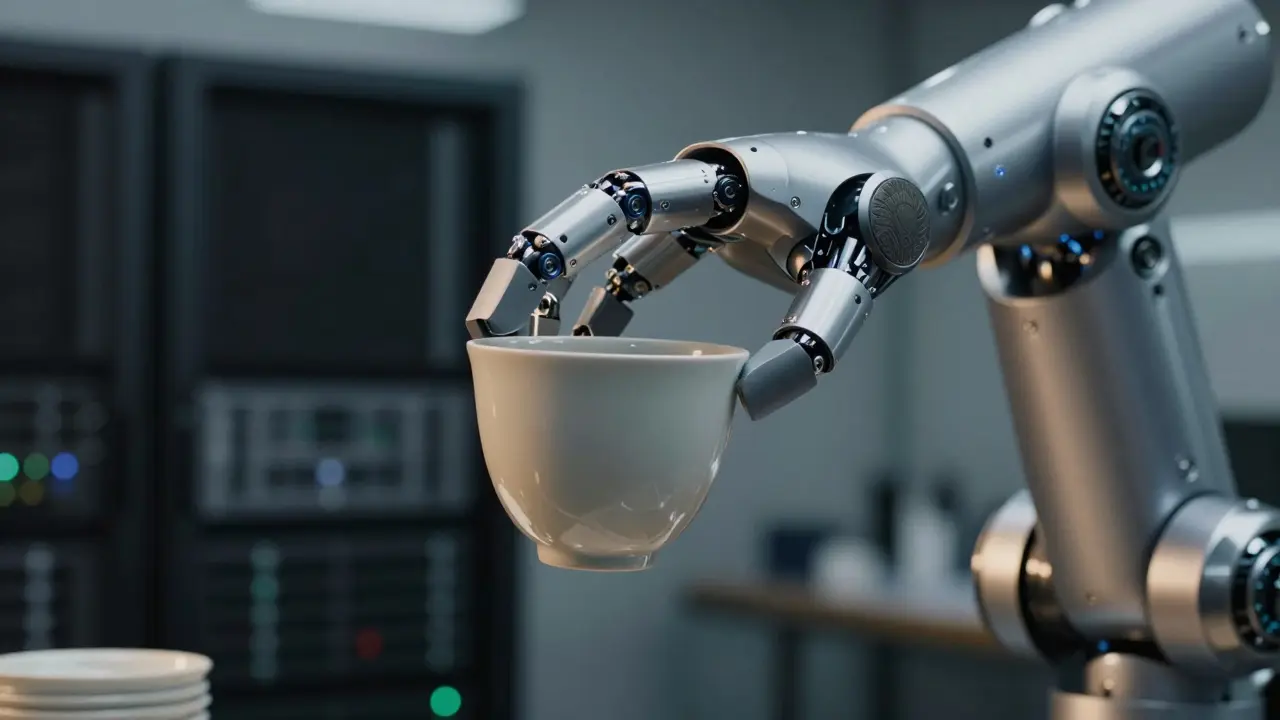

Looking ahead, the trend is moving toward embodied multimodal AI, which integrates physical sensor data. NVIDIA's Project GR00T, announced in March 2024, combines vision, audio, and tactile inputs for robotics applications. Imagine a robot that doesn't just see a cup but also feels its weight and temperature, so it can grip it firmly without dropping it.

Long-term viability appears strong. In an October 2025 survey, 91% of AI researchers predicted that multimodal capabilities will become standard in all generative AI systems within three years. The market is growing fast: Gartner's 2025 report showed the multimodal AI market reached $18.7 billion in 2024, growing at a 47.3% CAGR. Healthcare, customer service, and retail are leading adoption.
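
To see what a 47.3% CAGR implies, the compound-growth formula V_t = V_0(1 + r)^t can be applied to the 2024 figure. This is an illustrative extrapolation under the assumption that the rate stays constant, not a forecast from the Gartner report.

```python
def project_market(start_value: float, cagr: float, years: int) -> list:
    """Compound a starting value forward: V_t = V_0 * (1 + r) ** t."""
    return [start_value * (1 + cagr) ** t for t in range(years + 1)]


# 2024 market size ($ billions) and CAGR quoted above; constant rate assumed.
for year, value in zip(range(2024, 2027), project_market(18.7, 0.473, 2)):
    print(year, f"${value:.1f}B")
# 2024 $18.7B
# 2025 $27.5B
# 2026 $40.6B
```

Compounding is what makes these figures climb so fast: at 47.3% a year, the value more than doubles in two years (1.473² ≈ 2.17).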

Meta's June 2025 Llama 3.1 update improved non-English multimodal understanding by 38.7% across 200 languages. This global accessibility is crucial. As the technology matures, we will see fewer barriers between different types of data. The goal is truly context-aware artificial general intelligence. We are building systems that understand the world more like humans do, not just like libraries of text.

Frequently Asked Questions

What is the main advantage of multimodal AI over text-only systems?

The main advantage is the ability to capture contextual relationships across sensory inputs. Text-only systems miss visual and audio cues, leading to lower accuracy. Multimodal systems can analyze images, audio, and text together, resulting in up to 34% higher accuracy in vision-language tasks.

Do I need special hardware to run multimodal AI models?

Yes, specialized hardware is typically required. Effective multimodal training often needs at least 80GB of VRAM to handle high-resolution images alongside text. Standard consumer GPUs may struggle with the parallel processing demands of heterogeneous data streams.

How does multimodal AI improve healthcare diagnostics?

It reduces diagnostic errors by combining different data types. A Stanford University study showed a 37.2% reduction in errors when radiology images were analyzed with patient history. This is significantly better than text-only analysis of medical records.

Are there any risks associated with multimodal AI?

Yes, there are risks regarding bias and energy consumption. Multimodal systems show a 15.8% higher bias amplification rate when processing cultural context. They also consume 2.8x more energy per inference than text-only systems, which raises sustainability concerns.

Which companies are leading in multimodal AI development?

Google, OpenAI, and Meta are the primary leaders. Google holds about 32% market share, followed by OpenAI at 28%. Their models like Gemini and GPT-4o set the standard for unified architectures and cross-modal processing capabilities.

Richard H

March 25, 2026 AT 18:57

We need to keep this tech domestic. Relying on foreign data pipelines is a security nightmare. American innovation drives the world forward. If we let other nations control the multimodal standards, we lose our edge. The hardware requirements are high but worth it for national security. We should be leading the charge on these unified architectures. It is not just about convenience. It is about maintaining dominance in the global tech landscape. We cannot afford to lag behind in this race. Our military and healthcare sectors need this reliability now.

Kendall Storey

March 26, 2026 AT 15:39

The VRAM requirements are actually the bottleneck right now. You are looking at 80GB minimum for decent throughput. Most consumer setups just cannot handle the parallel processing demands. Inference costs spike when you add video streams to the mix. We see latency issues in low bandwidth environments too. Edge computing is the only real solution for remote deployment. The energy consumption is a massive factor for enterprise scaling. Training these models eats through power grids like nothing else. But the accuracy gains in vision-language tasks justify the expense. We are seeing thirty-four percent jumps in reliability benchmarks. It is not just a feature update. It is a fundamental architectural shift. Unified representations allow for better context understanding across modalities. Text alone misses the emotional tone in customer service calls. Audio analysis fixes that specific pain point immediately. We need to optimize the data pipelines to avoid alignment drops. Clean synchronized data is non-negotiable for performance. If the input formats are messy the model output degrades quickly. Developers need to plan for high VRAM hardware from day one.

Ashton Strong

March 28, 2026 AT 05:55

The implications for healthcare diagnostics are truly remarkable. Combining radiology images with patient history reduces errors significantly. This technology offers a path toward more precise medical outcomes. It mimics the workflow of experienced physicians quite well. We should embrace these tools to support our medical professionals. The reduction in diagnostic errors provides peace of mind for patients. Accuracy improvements of nearly twenty-nine percent are substantial. It is wonderful to see such positive advancements in generative AI. These systems will likely become standard in clinical settings soon. We must ensure the data remains secure and compliant. The future of medicine looks brighter with this integration.

Steven Hanton

March 30, 2026 AT 01:01

The potential for bias amplification in cultural contexts remains a significant concern for deployment.

Pamela Tanner

March 30, 2026 AT 20:29

It is essential that we address the ethical considerations surrounding bias. Multimodal systems can inadvertently amplify cultural stereotypes through image processing. Diverse training data across all modalities is required to mitigate this risk. We must ensure that the technology serves everyone equitably. Clear guidelines on data synchronization will help maintain model integrity. Education on these limitations is crucial for stakeholders. We have a responsibility to guide this technology responsibly. The benefits are clear but the risks require careful management. Inclusive design principles should be at the forefront of development. We can achieve high accuracy without compromising ethical standards.

Kristina Kalolo

March 30, 2026 AT 22:40

Hardware constraints are a major barrier for smaller teams trying to adopt this. Standard laptops simply cannot run high-end multimodal models efficiently. The shift to specialized GPUs is inevitable for serious applications. Energy efficiency will become a strategic consideration for scaling operations. Latency in remote areas needs offline capabilities to function correctly. Integration with enterprise security features helps meet compliance needs. The market growth suggests investment in infrastructure is necessary. We will see fewer barriers between data types as the technology matures. Context awareness is the ultimate goal for artificial general intelligence. Systems need to understand the world more like humans do.