Data Minimization Strategies for Generative AI: Collect Less, Protect More

Mar, 8 2026

Why collecting less data is the smartest move for generative AI

Think about how much personal data your company collects to train a chatbot or write marketing copy. Do you really need every email, chat log, and customer profile? Probably not. And that’s the problem. Generative AI doesn’t need all of it - just enough to learn the patterns. The more data you hoard, the bigger your risk. A single breach can cost millions, trigger regulatory fines, and destroy trust. The answer isn’t to stop using AI. It’s to collect less, protect more.

What data minimization really means (and what it doesn’t)

Data minimization isn’t about using tiny datasets. It’s about using the right data. The Information Policy Centre’s 2024 analysis makes this clear: if you need a million medical records to train a model that helps doctors draft notes, then collect a million. But if you’re building a customer service bot that answers billing questions, you don’t need full names, Social Security numbers, or health histories. Strip away what’s not needed. That’s minimization. It’s not a restriction - it’s a filter.

Four technical strategies that actually work

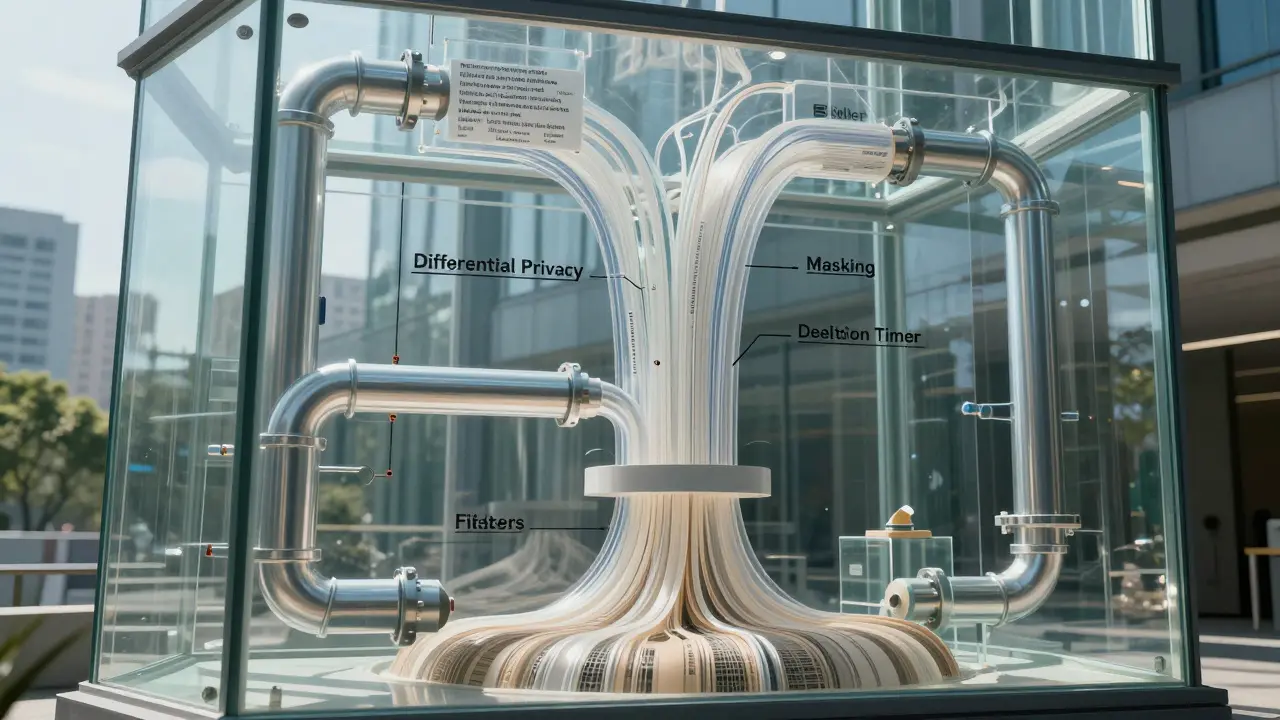

- Differential privacy: Add noise to query results or training updates. The noise is random, but it is calibrated mathematically so that individual details are hidden while overall trends stay intact. Studies show this cuts data leakage risk during training by up to 60%. With a sensibly chosen privacy budget, you still get accurate models, and the presence or absence of any one person’s record has only a small, bounded effect on the results.

- Data masking: Use fake but realistic data during development. If your team is testing a new AI feature, give them synthetic versions of customer data. Names become “User_7291,” addresses turn into “123 Fake St.” This stops accidental leaks inside your own company. Aviso’s guidelines say this is non-negotiable for dev and QA environments.

- Anonymization through generalization: Replace exact values with ranges. Instead of storing “Age: 34,” store “Age: 30-39.” Instead of “Zip code: 97401,” store “Region: Pacific Northwest.” This reduces identifiability without losing usefulness for training.

- Synthetic data generation: Use AI to create fake data that looks real. Tools powered by generative models learn the statistical patterns of your real data and produce new records that follow those patterns, so the real records never have to leave the secure environment. BigID found this slashes breach risk by 75% in shared datasets. Imagine your finance team sharing fraud detection patterns with a partner - without sharing a single real transaction.
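Three of the strategies above can be sketched with nothing but the Python standard library. This is a minimal illustration, not a production implementation: the function names, the salt, and the sample records are all hypothetical, and the noise comes from Python's general-purpose `random` module. For real differential privacy work, a vetted library is the safer choice.

```python
import hashlib
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def dp_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Noisy count. Adding or removing one person changes a count by at most 1,
    so the Laplace scale is 1 / epsilon (smaller epsilon = more noise)."""
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


def mask_name(name: str, salt: str) -> str:
    """Map a real name to a stable placeholder like 'User_0421' for dev/QA use.
    Four digits can collide; this is for test fixtures, not for anonymization."""
    digest = hashlib.sha256((salt + name).encode("utf-8")).hexdigest()
    return f"User_{int(digest[:8], 16) % 10000:04d}"


def generalize_age(age: int, width: int = 10) -> str:
    """Replace an exact age with a range, e.g. 34 -> '30-39'."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


if __name__ == "__main__":
    rng = random.Random()  # unseeded here; fix a seed only for tests
    customers = [  # made-up records for illustration
        {"name": "Ada Example", "age": 34},
        {"name": "Bo Sample", "age": 47},
        {"name": "Cy Placeholder", "age": 31},
    ]
    safe = [
        {"name": mask_name(c["name"], salt="dev-only-salt"),
         "age": generalize_age(c["age"])}
        for c in customers
    ]
    print(safe)
    print(dp_count(customers, lambda c: c["age"] < 40, epsilon=1.0, rng=rng))
```

The `epsilon` parameter is the trade-off knob: lower values add more noise and give stronger privacy, higher values give more accurate counts. Note that the salted hash in `mask_name` is a form of pseudonymization, so it protects test environments but does not make the data anonymous.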

Storage limitation: The silent partner of data minimization

Collecting less is only half the battle. Holding onto data too long is just as dangerous. A customer chats with your AI in January. By March, that conversation is irrelevant. Yet it’s still sitting in your database. That’s a liability. Storage limitation means setting automatic deletion rules. Keep data only as long as needed - for training, for compliance, for audit. Then wipe it. The GDPR and similar laws don’t just say “don’t collect too much.” They say “don’t keep too long.” Make deletion part of your pipeline, not an afterthought.
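As a rough sketch of what an automatic deletion rule could look like, the snippet below tags each record with a purpose and drops anything older than that purpose's retention window. The purposes, the windows, and the record layout are assumptions made up for illustration; your own retention periods should come from your legal and compliance requirements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows per purpose.
RETENTION = {
    "chat_log": timedelta(days=30),
    "training_sample": timedelta(days=180),
    "audit_record": timedelta(days=365),
}


def is_expired(record: dict, now: datetime) -> bool:
    """True when the record has outlived the window for its stated purpose."""
    limit = RETENTION.get(record["purpose"])
    if limit is None:
        # Design choice in this sketch: no documented purpose, no reason to keep.
        return True
    return now - record["collected_at"] > limit


def purge(records: list, now: datetime) -> tuple:
    """Split records into (kept, deleted) so the deletion can be logged."""
    kept = [r for r in records if not is_expired(r, now)]
    deleted = [r for r in records if is_expired(r, now)]
    return kept, deleted


if __name__ == "__main__":
    now = datetime(2026, 3, 8, tzinfo=timezone.utc)
    records = [
        {"id": 1, "purpose": "chat_log",
         "collected_at": datetime(2026, 1, 10, tzinfo=timezone.utc)},
        {"id": 2, "purpose": "chat_log",
         "collected_at": datetime(2026, 3, 1, tzinfo=timezone.utc)},
    ]
    kept, deleted = purge(records, now)
    print([r["id"] for r in kept], [r["id"] for r in deleted])
```

Running a job like this on a schedule inside the pipeline is what turns a retention policy from a document into a behavior. Treating records with an unknown purpose as expired is a deliberately strict default; a gentler variant would quarantine them for review instead.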

Build privacy in from day one - not as an add-on

Too many teams build AI models first, then slap on privacy controls later. That’s like building a house without locks, then buying a deadbolt after a break-in. Instead, design your data pipeline with privacy baked in. Ask these questions early:

- What data are we collecting - and why?

- Can we achieve the same result with less or synthetic data?

- Do we really need to store this for more than 30 days?

- Who has access, and how is it logged?

Use these answers to shape your data ingestion, training, and storage layers. The International Association of Privacy Professionals (IAPP) says fairness and minimization go hand-in-hand. A model trained on biased or excessive data isn’t just risky - it’s unethical. Privacy isn’t a compliance checkbox. It’s part of quality control.
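One way to turn the first two questions into something enforceable is an allowlist at the ingestion layer: every purpose declares the fields it needs, and everything else is dropped before it is stored. The sketch below assumes a hypothetical `billing_bot` purpose and made-up field names.

```python
# Hypothetical purposes and the only fields each one is allowed to ingest.
ALLOWED_FIELDS = {
    "billing_bot": {"ticket_id", "message_text", "plan_tier"},
}


def minimize(record: dict, purpose: str) -> tuple:
    """Return (minimized_record, dropped_field_names) for a declared purpose."""
    allowed = ALLOWED_FIELDS.get(purpose)
    if allowed is None:
        # Refuse to ingest data for a purpose nobody has documented.
        raise ValueError(f"no documented purpose: {purpose!r}")
    kept = {k: v for k, v in record.items() if k in allowed}
    dropped = sorted(k for k in record if k not in allowed)
    return kept, dropped


if __name__ == "__main__":
    raw = {
        "ticket_id": "T-1001",
        "message_text": "Why was I charged twice?",
        "plan_tier": "pro",
        "full_name": "Ada Example",
        "ssn": "000-00-0000",
    }
    kept, dropped = minimize(raw, "billing_bot")
    print(kept)
    print("dropped:", dropped)  # worth logging for the audit trail
```

An allowlist fails closed: a new field added upstream is dropped by default until someone justifies it. It does not inspect free text, so a field like `message_text` can still carry personal details and may need separate redaction.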

What happens when you skip data minimization

Organizations that ignore this principle end up with three big problems:

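- Regulatory fines: The EU’s GDPR can hit you with fines of up to €20 million or 4% of global annual turnover, whichever is higher, for the most serious violations. The U.S. is catching up - state laws like California’s CCPA, as amended by the CPRA, are already enforcing strict data handling rules.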

- Reputation damage: If customers find out you stored their private messages to train a chatbot, trust evaporates. No AI feature can win that back.

- Technical debt: More data means more storage costs, slower training times, harder audits, and higher risk of accidental exposure. It’s a snowball effect.

One healthcare startup in Oregon collected full patient transcripts to train a clinical note generator. They didn’t anonymize them. When a contractor accidentally uploaded the dataset to a public GitHub repo, the breach made national news. They shut down. All because they collected too much - and didn’t know how to protect it.

Tools and frameworks to help you get started

Don’t build this from scratch. Use existing tools:

- OpenDP: An open-source library for implementing differential privacy. Free, well-documented, and developed as a community project based at Harvard.

- Gretel.ai: Generates synthetic data from real datasets. Used by banks and insurers to share data without risk.

- BigID and OneTrust: Help you map what data you have, where it lives, and how long it should stay.

- GDPR and NIST AI Risk Management Framework: Both include clear guidance on data minimization as a core control.

Start small. Pick one AI project. Apply minimization to it. Measure the results. Then scale.

It’s not just legal - it’s smarter

Minimizing data doesn’t weaken your AI. It makes it leaner, faster, and more secure. You train on cleaner signals. You reduce storage costs. You avoid lawsuits. You earn trust. And in a world where every customer expects privacy, that trust is your biggest competitive edge.

Generative AI doesn’t need to know your name, your address, or your medical history to write a good email. It just needs to understand tone, structure, and intent. Focus on that. Leave the rest behind.

Does data minimization make generative AI less accurate?

No - not if done right. Accuracy comes from quality, not quantity. A model trained on 10,000 clean, relevant, anonymized records often outperforms one trained on 1 million messy, unfiltered ones. Techniques like differential privacy and synthetic data preserve the overall patterns while reducing the risk to individuals. The goal isn’t less data - it’s smarter data.

Can I use public data instead of collecting my own?

Sometimes, yes - but be careful. Public data isn’t always safe. Social media posts, forum threads, and scraped websites can still contain personal identifiers or sensitive information. Even if data is public, using it in AI may violate terms of service or privacy expectations. Always audit public datasets for hidden risks before training.

What’s the difference between anonymization and pseudonymization?

Anonymization removes the links to identity so that individuals can no longer be re-identified by any means reasonably likely to be used. Pseudonymization replaces identifiers with stand-ins (like replacing names with IDs), but a key or mapping that can restore the originals still exists somewhere. Under GDPR, pseudonymized data is still considered personal data. Anonymized data is not. For generative AI, aim for true anonymization - not just masking.
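The distinction is easier to see in code. The sketch below pseudonymizes an email with a keyed hash (HMAC-SHA256 from the standard library) and, separately, drops the identifier altogether. The key, the field names, and the sample record are hypothetical.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, key: bytes) -> str:
    """Keyed hash of an identifier. Same key + same input = same token,
    so records stay linkable, and the key holder can re-link known people."""
    mac = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256)
    return mac.hexdigest()[:16]


def drop_direct_identifiers(record: dict, identifier_fields: set) -> dict:
    """Remove direct identifiers outright. This alone does not guarantee
    anonymity: remaining fields can still single someone out in combination."""
    return {k: v for k, v in record.items() if k not in identifier_fields}


if __name__ == "__main__":
    key = b"hypothetical-secret-key"  # would live in a secrets manager
    record = {"email": "ada@example.com", "plan": "pro", "age_band": "30-39"}

    pseudo = {**record, "email": pseudonymize(record["email"], key)}
    anon = drop_direct_identifiers(record, {"email"})

    # Anyone holding the key can test a guess against the token.
    relinked = pseudo["email"] == pseudonymize("ada@example.com", key)
    print(pseudo, anon, relinked)
```

Because the token can be recomputed by anyone with the key, the pseudonymized record is still tied to a person. Dropping the field removes that link, but whether the result counts as anonymous depends on what the remaining fields reveal when combined.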

Do I need a data protection officer to implement this?

Not necessarily - but you do need someone accountable. GDPR requires a DPO if your core activities involve large-scale processing of sensitive categories of data (such as health data) or regular, systematic monitoring of individuals at scale. Even if not legally required, assign ownership of data minimization to a team member. Track decisions, document policies, and audit regularly. Privacy fails when it’s someone else’s problem.

Is synthetic data good enough for training?

In many cases, yes. Synthetic data created by generative AI models replicates statistical patterns, correlations, and distributions of real data without reproducing any real individual’s record, provided the generator has not simply memorized its training data. For tasks like fraud detection, customer segmentation, or language modeling, models trained on good synthetic data can perform close to those trained on real data. The main exceptions are highly unique cases, like rare medical conditions - but even then, you can augment synthetic data with minimal real samples.

How often should I audit my data practices?

At least every six months - or whenever you launch a new AI model. Data needs change. New features mean new data collection. Old datasets get reused. Regular audits catch drift before it becomes a breach. Keep logs of what data you collected, why, how long you kept it, and when it was deleted.
