Generative AI in Biotech: Molecule Generation and Lab Notebook Integration

May 17, 2026

Imagine scanning a chemical space estimated at 10^60 possible structures to find the perfect drug candidate. For decades, this was a theoretical nightmare for medicinal chemists. Today, generative artificial intelligence is turning that impossible task into a manageable workflow. The intersection of biotechnology and generative AI is not just about faster computation; it is about fundamentally changing how we discover and validate new molecules.

The traditional path to bringing a single drug to market costs approximately $2.6 billion and takes 10-15 years. Generative AI promises to cut both figures significantly. But the technology is more than just code running on servers. It requires a seamless bridge between digital design and physical reality, specifically through integration with electronic lab notebooks (ELNs). This article breaks down how molecule generation works, the current state of lab notebook integration, and what you need to know to implement these tools effectively in 2026.

The Evolution of Molecule Generation

The journey began around 2016-2017 with early applications of variational autoencoders (VAEs) and generative adversarial networks (GANs). A seminal moment came in 2017, when AstraZeneca researchers published "Molecular De Novo Design through Deep Reinforcement Learning." These early models could generate valid molecular strings, but they lacked precision. They were like artists painting without knowing the anatomy of their subject.

Since 2020, the field has accelerated dramatically with the emergence of diffusion models and transformer architectures. Current state-of-the-art approaches now include techniques like PRODIGY (PROjected DIffusion for controlled Graph Generation), developed by researchers at Georgia Institute of Technology. Presented at the International Conference on Machine Learning (ICML) in July 2024, PRODIGY allows users to specify exact constraints, such as atom count and bond types. This level of control was previously unattainable with conventional diffusion approaches.

Why does this matter? Because validity rates have jumped. Diffusion models like EDM (Equivariant Diffusion for Molecules) and GCDM (Geometry-Complete Diffusion Models) now demonstrate 92% validity rates compared to 77% for traditional methods. More importantly, novelty scores are up by 30%. You are not just getting valid molecules; you are getting novel ones that human intuition might never have explored.

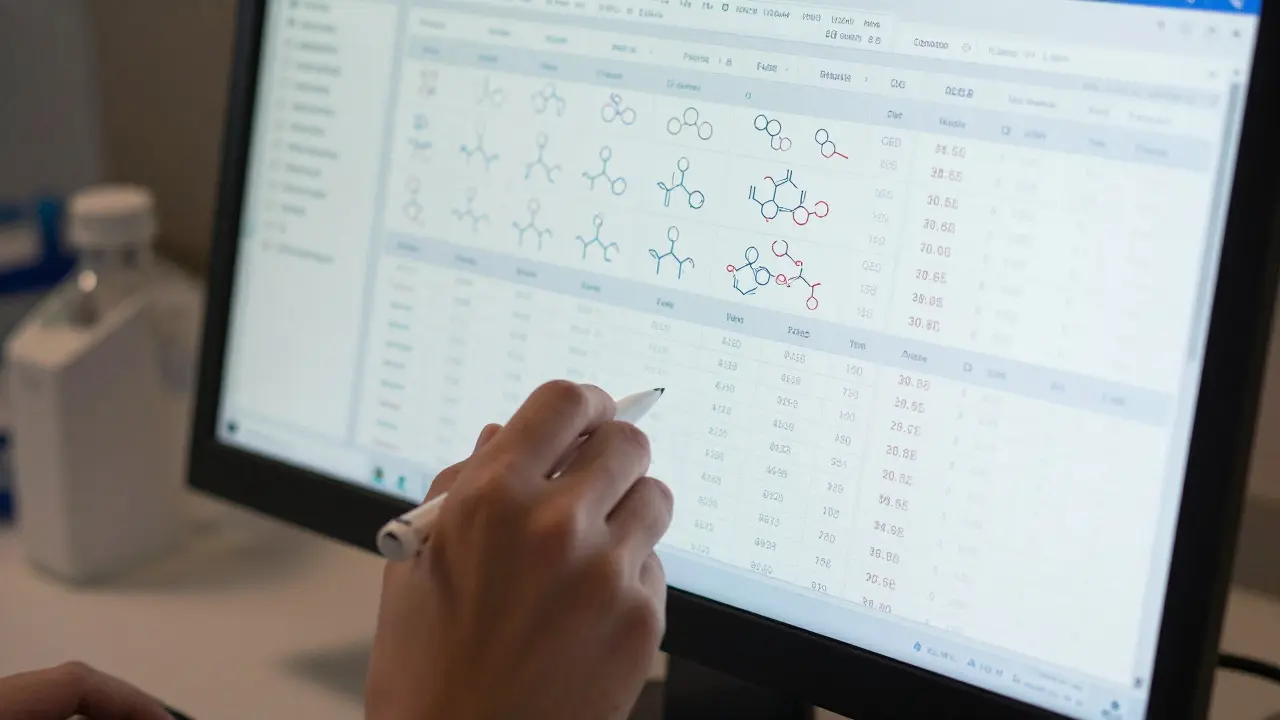

How Modern Molecule Generation Works

To understand the output, you need to look at the input. Most modern systems follow a standard workflow:

- Data Curation: Researchers use curated SMILES-based datasets containing 1-10 million molecular structures. Sources like ChEMBL or ZINC are common starting points.

- Model Training: VAEs, GANs, or diffusion models are trained on these representations. Diffusion models require 2-3x more compute than VAEs but deliver 15-20% better results in terms of validity and novelty.

- Validation: Outputs are checked against metrics like Quantitative Estimate of Drug-likeness (QED) scores. A target QED score of >0.6 is generally considered viable for candidates.
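
The validity and novelty figures quoted throughout this article are simple ratios over a batch of generated structures. Here is a minimal sketch of how they could be computed. The `looks_like_valid_smiles` check is a deliberately crude, hypothetical stand-in for a real chemistry parser (it only checks bracket balance and ring-closure pairing), and the function names are illustrative assumptions rather than part of any particular toolkit.

```python
def looks_like_valid_smiles(smiles):
    """Crude syntactic stand-in for a real SMILES parser (assumption).

    Accepts a string only if it is non-empty, its parentheses and square
    brackets are balanced, and each ring-closure digit occurs an even
    number of times. It knows nothing about valence or chemistry.
    """
    if not smiles:
        return False
    depth = 0
    in_bracket = False
    ring_digits = {}
    for ch in smiles:
        if ch == "[":
            if in_bracket:
                return False
            in_bracket = True
        elif ch == "]":
            if not in_bracket:
                return False
            in_bracket = False
        elif in_bracket:
            continue  # digits inside [...] are isotopes or charges, not rings
        elif ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
        elif ch.isdigit():
            ring_digits[ch] = ring_digits.get(ch, 0) + 1
    rings_closed = all(count % 2 == 0 for count in ring_digits.values())
    return depth == 0 and not in_bracket and rings_closed


def generation_metrics(generated, training_set, is_valid=looks_like_valid_smiles):
    """Return validity, uniqueness, and novelty as fractions in [0, 1].

    Strings are compared verbatim here; a real pipeline would normally
    canonicalize SMILES before checking uniqueness and novelty.
    """
    generated = list(generated)
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


# Toy batch: "C1CC" has an unclosed ring, and "CCO" is already in training data.
metrics = generation_metrics(
    generated=["CCO", "c1ccccc1", "C1CC", "CC(=O)O", "CCO"],
    training_set={"CCO"},
)
```

In practice the `is_valid` argument would be swapped for a proper cheminformatics parser, and a drug-likeness filter such as the QED > 0.6 threshold would be applied to the surviving structures.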

The PRODIGY system operates uniquely by projecting the generation process to meet user-specified constraints. In validation tests from July 2024, it achieved an 89% success rate for molecules meeting specific atom/bond constraints, compared to 76% for previous diffusion models. This precision is critical when designing molecules for specific protein structures, where even a slight deviation can render the compound ineffective.
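
To make the idea of "projecting" a generation process concrete, here is a toy sketch. It is not the PRODIGY code: the constraint (a cap on the total number of bonds), the placeholder denoiser, and all function names are assumptions chosen for illustration. Each entry of `x` stands for a soft "is there a bond here?" value, and after every denoising step the state is projected back onto the set of vectors that satisfy the bond budget.

```python
import random


def project_onto_budget(values, budget, tol=1e-9):
    """Euclidean projection onto {x : 0 <= x_i <= 1, sum(x) <= budget}.

    Assumes budget >= 0. If box clipping alone is feasible, that is the
    answer. Otherwise the projection is clip(v - lam, 0, 1) for the shift
    lam >= 0 that makes the sum meet the budget, found here by bisection.
    """
    clipped = [min(1.0, max(0.0, v)) for v in values]
    if sum(clipped) <= budget:
        return clipped
    lo, hi = 0.0, max(values)  # at lam = max(values) the sum is 0
    while hi - lo > tol:
        lam = (lo + hi) / 2
        total = sum(min(1.0, max(0.0, v - lam)) for v in values)
        if total > budget:
            lo = lam
        else:
            hi = lam
    return [min(1.0, max(0.0, v - hi)) for v in values]


def constrained_denoise(n_edges, budget, steps=50, seed=0):
    """Toy reverse process: placeholder denoising step, then projection."""
    rng = random.Random(seed)
    x = [rng.gauss(0.5, 1.0) for _ in range(n_edges)]
    for t in range(steps):
        noise_scale = 1.0 - (t + 1) / steps
        # Placeholder denoiser (assumption): pull each entry toward 0 or 1.
        x = [
            xi + 0.2 * ((1.0 if xi > 0.5 else 0.0) - xi)
            + 0.1 * noise_scale * rng.gauss(0.0, 1.0)
            for xi in x
        ]
        # Enforce the user-specified constraint at every step.
        x = project_onto_budget(x, budget)
    return x


projected = project_onto_budget([0.9, 0.8, 0.1], budget=1.0)
sample = constrained_denoise(n_edges=20, budget=6.0)
```

The design point is that the constraint is enforced during sampling rather than by discarding non-compliant outputs afterwards, which is why every intermediate state in the loop stays inside the allowed region.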

| Architecture | Validity Rate | Novelty Score | Compute Requirement |
|---|---|---|---|
| SMILES-based RNNs | 79% | Baseline | Low |
| Junction Tree VAE (JTVAE) | 93% | High | Medium-High |
| GAN-based Methods | 87.6% | Medium | High |
| Diffusion Models (GCDM) | 94.2% | Very High | Very High |

The Gap Between Digital Design and Physical Reality

Here is where things get tricky. You can generate a perfect molecule on a screen, but can you synthesize it in a lab? This is known as the "synthesis gap." According to a 2023 analysis in Nature Reviews Drug Discovery, only 30-40% of AI-generated molecules prove synthesizable in laboratory conditions. Current models achieve only 65-70% accuracy in predicting synthesizability.

User feedback reflects this frustration. On Reddit’s r/bioinformatics community, 43% of negative reviews centered on synthesis failures. One organic chemist noted, "I've had 3 out of 5 AI-designed molecules fail at the synthesis stage due to unanticipated reactivity." This highlights a critical limitation: most models still reason over 2D representations of molecules, while biology happens in 3D.

Professor Regina Barzilay of MIT cautioned in May 2024 that current models still lack adequate spatial reasoning capabilities. To bridge this gap, successful implementations use a "closed-loop" approach: candidates are refined iteratively through in-silico predictions, docking simulations, and routine medicinal chemistry filters before any synthesis is attempted. Insilico Medicine reports 40% higher success rates using this methodology compared to single-pass generation.
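
The closed-loop idea can be sketched in a few lines. Everything below is a stand-in: the generator samples random scores instead of real molecules, `docking` and `synth` are placeholder numbers rather than outputs of a docking engine or synthesizability model, and the 0.5 synthesizability cut-off is an assumption. Only the QED > 0.6 threshold comes from the workflow described earlier.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    qed: float      # drug-likeness estimate in [0, 1]
    docking: float  # placeholder docking score, lower is better
    synth: float    # placeholder synthesizability estimate in [0, 1]


def clamp01(value):
    return min(1.0, max(0.0, value))


def propose(rng, round_idx, n, parents):
    """Placeholder generator: random in round 0, perturbed parents after."""
    batch = []
    for i in range(n):
        if parents:
            p = rng.choice(parents)
            qed = clamp01(p.qed + rng.uniform(-0.1, 0.1))
            docking = p.docking + rng.uniform(-1.0, 0.5)
            synth = clamp01(p.synth + rng.uniform(-0.1, 0.1))
        else:
            qed, docking, synth = rng.random(), rng.uniform(-10.0, 0.0), rng.random()
        batch.append(Candidate(f"r{round_idx}-m{i}", qed, docking, synth))
    return batch


def passes_filters(c, min_qed=0.6, min_synth=0.5):
    """Medicinal chemistry style gate applied before any ranking."""
    return c.qed > min_qed and c.synth >= min_synth


def closed_loop(rounds=5, batch=200, keep=10, seed=0):
    """Generate, filter, rank by docking score, and feed the best back in."""
    rng = random.Random(seed)
    parents = []
    for round_idx in range(rounds):
        pool = parents + propose(rng, round_idx, batch, parents)
        survivors = [c for c in pool if passes_filters(c)]
        parents = sorted(survivors, key=lambda c: c.docking)[:keep]
    return parents


shortlist = closed_loop()
```

Because the previous round's best candidates stay in the pool, the top docking score can only improve or hold from round to round, and nothing reaches the shortlist without passing the filters first.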

Integrating Generative AI with Electronic Lab Notebooks

This is the emerging frontier. While molecule generation has seen substantial research, the integration with electronic lab notebooks (ELNs) remains nascent. As of late 2024, only 15% of major ELN platforms offered native generative AI capabilities. However, 67% have announced planned integrations within 18 months.

Leading platforms like Benchling and LabArchives are beginning to incorporate AI capabilities. The goal is to create a seamless workflow where AI-generated molecules are automatically logged, tracked, and linked to experimental results. This reduces manual data entry errors and ensures reproducibility.
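
What "automatically logged, tracked, and linked" might look like in data terms is sketched below. This is not the schema or API of Benchling, LabArchives, or any other ELN; the class name, field names, and ID scheme are all hypothetical. The point is that each generated molecule carries its provenance (model, version, constraints) and can be tied to experiment entries later.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def _utc_now():
    return datetime.now(timezone.utc).isoformat()


@dataclass
class GeneratedMoleculeRecord:
    """Hypothetical provenance record for one AI-generated molecule."""
    smiles: str
    model_name: str
    model_version: str
    constraints: dict
    created_at: str = field(default_factory=_utc_now)
    experiment_ids: list = field(default_factory=list)

    @property
    def record_id(self):
        """Stable ID derived from the design inputs, not from the timestamp."""
        payload = json.dumps(
            {
                "smiles": self.smiles,
                "model_name": self.model_name,
                "model_version": self.model_version,
                "constraints": self.constraints,
            },
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:16]

    def link_experiment(self, experiment_id):
        """Attach an experiment entry once, ignoring duplicates."""
        if experiment_id not in self.experiment_ids:
            self.experiment_ids.append(experiment_id)

    def to_json(self):
        data = asdict(self)
        data["record_id"] = self.record_id
        return json.dumps(data, sort_keys=True)


record = GeneratedMoleculeRecord(
    smiles="CC(=O)O",
    model_name="demo-diffusion",  # hypothetical model name
    model_version="0.1",
    constraints={"max_heavy_atoms": 10},
)
record.link_experiment("EXP-001")  # hypothetical experiment identifier
entry = record.to_json()
```

Deriving the ID from the design inputs means the same molecule produced by the same model under the same constraints always maps to the same record, which helps avoid duplicate entries when a generation run is repeated.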

Why is this integration so hard? Legacy systems were not built for AI workflows. They lack the infrastructure to handle the volume and velocity of data generated by AI models. Furthermore, regulatory considerations are evolving. The FDA released draft guidance in February 2024 acknowledging AI-generated molecules but requiring "enhanced validation data packages," which adds 3-6 months to preclinical timelines. ELNs must be able to capture this additional data rigorously.

Practical Implementation Challenges

Getting started requires significant expertise and infrastructure. The learning curve is steep, typically taking 6-12 months for computational chemists to become proficient with advanced generative frameworks. Here are the key hurdles:

- Computational Resources: Training modern diffusion models typically requires 4-8 NVIDIA A100 GPUs for 3-7 days on standard datasets. This is a barrier for many biotech startups.

- Data Scarcity: Niche therapeutic areas often have only 5,000-10,000 high-quality structures available. This requires transfer learning approaches, adding 2-3 weeks to implementation timelines.

- Documentation and Support: Open-source projects like REINVENT offer comprehensive tutorials but lack polished support. Commercial platforms provide better support but less transparency. Community support is strongest around open-source frameworks like DeepChem, which maintains active discussion forums with 200+ monthly contributions.
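
The compute figure is easier to budget for once it is converted to GPU-hours. The short sketch below does that arithmetic for the 4-8 GPU, 3-7 day range quoted above. The hourly price is a made-up placeholder, not a quote from any cloud provider, so substitute your own rate.

```python
def gpu_hours(num_gpus, days):
    """Total GPU-hours for a run that keeps every GPU busy around the clock."""
    return num_gpus * days * 24


def training_cost(num_gpus, days, usd_per_gpu_hour):
    """Cost estimate for a single training run at a given hourly rate."""
    return gpu_hours(num_gpus, days) * usd_per_gpu_hour


low_hours = gpu_hours(4, 3)   # smallest configuration in the quoted range
high_hours = gpu_hours(8, 7)  # largest configuration in the quoted range

PLACEHOLDER_RATE = 2.0  # hypothetical USD per GPU-hour, for illustration only
low_cost = training_cost(4, 3, PLACEHOLDER_RATE)
high_cost = training_cost(8, 7, PLACEHOLDER_RATE)
```

The range works out to 288 to 1,344 GPU-hours per training run, before counting failed runs, hyperparameter sweeps, or the transfer-learning passes needed for small datasets.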

Adoption rates vary significantly by organization size. McKinsey’s June 2024 survey found that 87% of top 20 pharmaceutical companies have active generative AI initiatives, compared to only 32% of biotech startups. Resource constraints are the primary differentiator.

Market Context and Future Outlook

The generative AI drug discovery market was valued at $1.34 billion in 2023 and is projected to reach $12.97 billion by 2030, growing at a 38.5% CAGR. Leading players include Insilico Medicine, Recursion Pharmaceuticals, and BenevolentAI.
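
Growth figures like these are easy to sanity-check with the compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The sketch below applies it to the numbers quoted above, assuming a seven-year span from 2023 to 2030.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by a start and end value."""
    return (end_value / start_value) ** (1 / years) - 1


def project_value(start_value, rate, years):
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# Figures in billions of USD; the 7-year span (2023 to 2030) is an assumption.
cagr = implied_cagr(1.34, 12.97, years=7)
projected_2030 = project_value(1.34, 0.385, years=7)
```

The implied rate comes out at roughly 38.3%, and compounding 38.5% for seven years gives about $13.1 billion. The small gap from the quoted figures could be down to rounding or a different base year in the original forecast.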

Major pharmaceutical companies are building "AI-native" laboratory environments. Pfizer’s Cambridge facility, operational since September 2024, features automated synthesis robots that receive direct input from generative AI models. This reduces the design-make-test cycle from weeks to 72 hours. Recursion Pharmaceuticals reports 5x faster optimization cycles using similar closed-loop systems.

Analysts predict that by 2028, 40% of novel drug candidates will originate from AI-driven design processes. However, the technology's long-term viability depends on demonstrating clinical success. As of January 2025, only a small number of AI-designed molecules had entered clinical trials, among them Insilico's ISM001-055 for fibrosis, Exscientia's DSP-1181 for obsessive-compulsive disorder, and an undisclosed candidate from Generate Biomedicines. The next few years will be crucial in proving whether AI can consistently deliver drugs that work in humans, not just in silico.

What is the biggest challenge in using generative AI for molecule generation?

The biggest challenge is the "synthesis gap." While AI can generate valid and novel molecules quickly, only 30-40% of these molecules are actually synthesizable in a lab. Current models struggle with predicting real-world reactivity and 3D spatial constraints accurately.

How do electronic lab notebooks (ELNs) integrate with generative AI?

Integration is currently nascent, with only 15% of major ELN platforms offering native AI capabilities as of late 2024. The goal is to automate the logging of AI-generated molecules, link them to experimental results, and ensure compliance with regulatory requirements for enhanced validation data packages.

Which AI architecture is best for molecule generation in 2026?

Diffusion models, particularly those like GCDM and PRODIGY, are currently leading the field. They offer higher validity rates (up to 94%) and better novelty scores compared to older VAE or GAN-based methods, though they require significantly more computational power.

Can small biotech startups afford to implement generative AI?

It is challenging. Only 32% of biotech startups have active generative AI initiatives due to resource constraints. Training models requires expensive hardware (like NVIDIA A100 GPUs) and specialized expertise. Many startups rely on cloud-based services or partnerships with larger pharma companies to access these capabilities.

What is the role of the FDA in regulating AI-generated drugs?

The FDA released draft guidance in February 2024 acknowledging AI-generated molecules. The guidance requires "enhanced validation data packages," which means more rigorous testing and documentation. This adds approximately 3-6 months to preclinical timelines but is intended to ensure that safety and efficacy standards are met.