Model Compression for LLMs: Distillation, Quantization, and Pruning Guide

Apr, 18 2026

Imagine trying to fit a massive library of information into a pocket-sized notebook without losing the core meaning of the books. That is essentially the challenge of model compression for Large Language Models (LLMs). When GPT-3 launched with 175 billion parameters, it changed everything, but it also created a massive problem: you need a small fortune in hardware just to run it. Even a 70-billion-parameter model like LLaMA-70B needs roughly 140GB for its weights alone in 16-bit precision, which means at least two A100 GPUs with 80GB of memory each before you account for activations and the KV cache. That is not practical for a mobile app or a lean cloud budget.
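A rough way to see where the hardware bill comes from is to multiply the parameter count by the bytes stored per weight. The sketch below does only that. The helper name `weight_memory_gb` is made up for illustration, and the estimate ignores activations, the KV cache, and framework overhead, all of which add to the real requirement.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Memory needed to hold the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Compare common precisions for two model sizes mentioned in this article.
for name, params in [("70B model", 70e9), ("175B model", 175e9)]:
    for bits in (32, 16, 8, 4):
        print(f"{name} at {bits}-bit: {weight_memory_gb(params, bits):.0f} GB")
```

By this estimate a 70B model needs about 140 GB for its weights at 16 bits and about 35 GB at 4 bits, which is the gap the rest of this guide is trying to close.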

The goal here isn't just to make the model smaller, but to do it without making the AI "stupid." We want to keep the versatility and generalization (the ability to handle diverse tasks) while slashing the memory footprint. Whether you are a developer trying to run a bot on a MacBook M1 or an enterprise cutting cloud costs, understanding the trade-offs between distillation, quantization, and pruning is the only way to move from a research prototype to a real-world product.

The Quick Breakdown: Which Technique Should You Use?

Not all compression methods are created equal. Some are "plug-and-play," while others require weeks of retraining. If you need a fast win, quantization is your best bet. If you are building a specialized small model from scratch, distillation is the way to go. If you have specialized hardware and a lot of patience, pruning can offer deeper cuts.

| Method | Primary Goal | Typical Compression | Implementation Effort | Best For |
|---|---|---|---|---|
| Quantization | Reduce numerical precision | 2x - 8x | Low (Hours/Days) | Real-time chat, edge devices |
| Pruning | Remove redundant weights | Up to 60% sparsity | Medium/High (Weeks) | Memory-constrained hardware |
| Distillation | Transfer knowledge | Up to 7.5x | High (Weeks/Months) | High-accuracy small models |

Quantization: Slashing Precision to Boost Speed

Think of quantization as rounding numbers. In a standard model, weights are stored as 32-bit or 16-bit floating-point numbers (FP32 or FP16). That is more precision than inference usually needs. Quantization is the process of reducing the number of bits used to represent each weight in a neural network. By moving from FP32 to 8-bit integers (INT8) or even 4-bit, you cut the bytes per weight by 4x to 8x and drastically reduce the amount of VRAM needed.
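To make the "rounding" idea concrete, here is a minimal sketch of symmetric absmax quantization to INT8, one simple scheme among several. The function names are illustrative, and real libraries typically work per channel or per block rather than with one scale for a whole tensor.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0  # avoid dividing by zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q)         # the small integers that would actually be stored
print(restored)  # close to the originals, but 0.003 has been rounded to 0.0
```

The smallest weight collapses to zero because it falls below half a quantization step. That lost detail is the accuracy cost you trade for a 4x smaller tensor compared with FP32.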

There are two main ways to do this. First, there is Post-Training Quantization (PTQ). This is the "easy button" where you convert the weights after the model is already trained. It's fast and requires no retraining. Then there is Quantization-Aware Training (QAT), where the model "learns" to deal with the lower precision during the training phase. QAT usually preserves more accuracy at low bit-widths, at the cost of a full training or fine-tuning run.
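The difference between the two is easiest to see in a toy. The sketch below trains a single weight with "fake quantization" in the forward pass and a straight-through estimator for the gradient, which is one common way to do QAT. Everything here (the names `fake_quantize` and `train_qat`, the 4-bit grid with step 0.25, the tiny dataset) is an assumption chosen for illustration, not how any particular framework implements it.

```python
def fake_quantize(w, scale=0.25, bits=4):
    """Round w to the nearest point on a signed integer grid, then map back to float."""
    qmax = 2 ** (bits - 1) - 1
    q = max(-qmax, min(qmax, round(w / scale)))
    return q * scale


def train_qat(xs, ys, scale=0.25, bits=4, lr=0.05, steps=200):
    """Fit y = w * x while the forward pass only ever sees the quantized weight."""
    w = 0.0  # full-precision "master" weight kept during training
    for _ in range(steps):
        w_q = fake_quantize(w, scale, bits)
        # Gradient of mean squared error with respect to the quantized weight.
        grad = sum(2 * (w_q * x - y) * x for x, y in zip(xs, ys)) / len(xs)
        # Straight-through estimator: treat rounding as identity in the backward pass.
        w -= lr * grad
    return w, fake_quantize(w, scale, bits)


master, deployed = train_qat([1.0, 2.0, 3.0], [1.6, 3.2, 4.8])
print(master, deployed)
```

The ideal weight (1.6) is not on the grid, so training settles the master weight near the boundary between the two nearest grid points. PTQ would skip the loop entirely and simply call `fake_quantize` on an already trained weight.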

In the real world, we're seeing amazing results. The llama.cpp project has shown that 4-bit quantization can lead to a 4x speedup on M1 Max chips. However, it's not a free lunch. Reducing a model to 4-bit can cause a dip in complex reasoning. For example, some users reported Llama-3-70B's MMLU score dropping from 53.2 to 47.8 after aggressive quantization. The rule of thumb? 8-bit is usually safe; 4-bit requires calibration; anything below 3-bit often leads to a "collapsed" model that talks in riddles.

Pruning: Cutting the Dead Weight

If quantization is about rounding numbers, pruning is about deleting them. Pruning is the removal of redundant or unnecessary parameters from a trained model to reduce its size. Not every connection in a neural network is useful; some are essentially doing nothing.

There are three common flavors of pruning:

- Weight Pruning: Removing individual weights with the lowest magnitude. This creates "sparse" matrices.

- Neuron Pruning: Deleting entire neurons, which is a more aggressive cut.

- Structured Pruning: Removing entire channels or layers. This is much more hardware-friendly because modern GPUs struggle with the random gaps created by unstructured pruning.
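The contrast between unstructured and structured pruning can be sketched on a small weight matrix. This is a minimal illustration using plain lists. The function names are made up, rows stand in for neurons, and real pruning methods use more careful importance scores than raw magnitude.

```python
def magnitude_prune(matrix, sparsity):
    """Unstructured: zero out the smallest-magnitude weights (ties may prune a few extra)."""
    magnitudes = sorted(abs(w) for row in matrix for w in row)
    k = int(len(magnitudes) * sparsity)
    if k == 0:
        return [row[:] for row in matrix]
    threshold = magnitudes[k - 1]
    return [[0.0 if abs(w) <= threshold else w for w in row] for row in matrix]


def prune_rows(matrix, keep):
    """Structured: drop whole rows (neurons) with the smallest squared L2 norm."""
    scored = sorted(((sum(w * w for w in row), i) for i, row in enumerate(matrix)), reverse=True)
    kept = sorted(i for _, i in scored[:keep])
    return [matrix[i][:] for i in kept]


W = [
    [0.9, -0.05, 0.4],
    [0.01, 0.02, -0.03],
    [-0.7, 0.6, 0.1],
]
print(magnitude_prune(W, 0.5))  # same shape, scattered zeros
print(prune_rows(W, 2))         # a genuinely smaller 2x3 matrix
```

The first result keeps its 3x3 shape, so a dense matrix multiply does the same amount of work as before. The second is a smaller dense matrix, which is why structured pruning speeds things up on ordinary hardware.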

A newer approach called FLAP (Fluctuation-based Adaptive Structured Pruning) uses a bias compensation mechanism to avoid the need for fine-tuning. This is a game-changer because traditional pruning often requires weeks of retraining to recover lost performance. But be warned: pruning is technically demanding. While you can quantize a model with 10 lines of code using Hugging Face's Optimum, structured pruning often requires deep architecture changes that can take weeks of engineering.

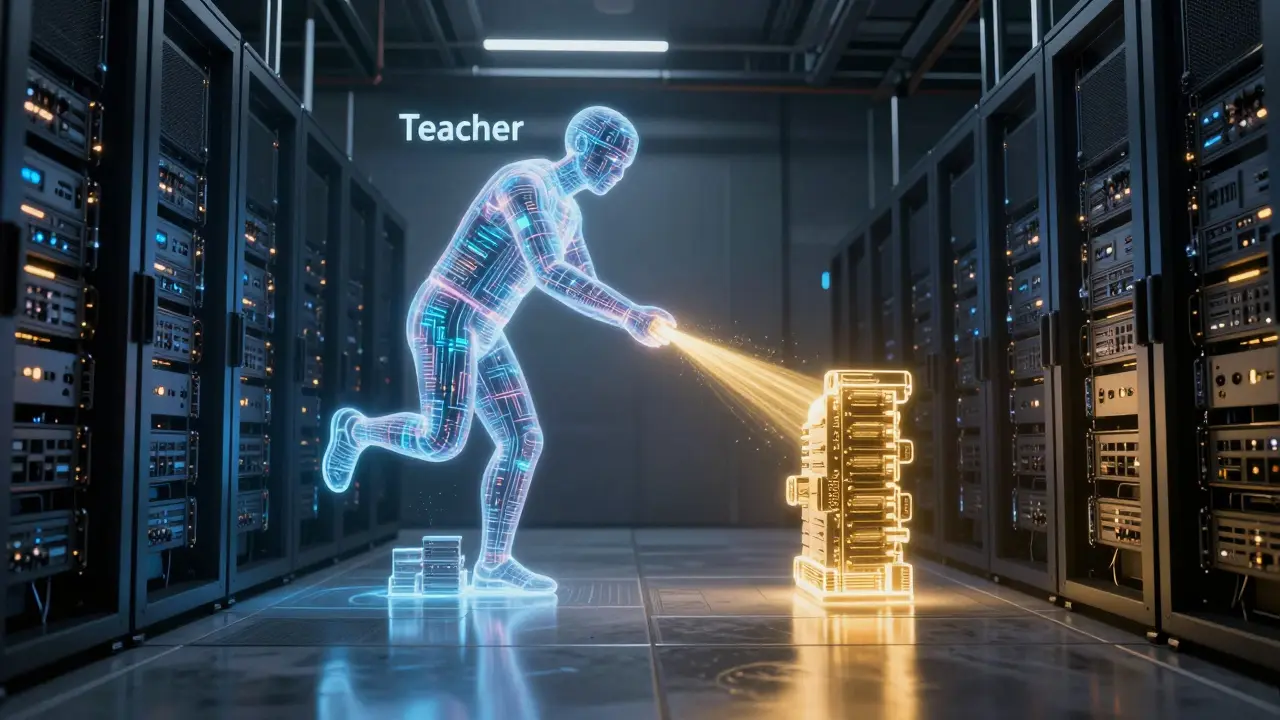

Knowledge Distillation: The Teacher and the Student

Knowledge Distillation is a different beast entirely. Instead of shrinking an existing model, you use a giant, high-performing "Teacher" model to train a much smaller "Student" model. The student is taught to mimic the output distributions of the larger, pre-trained model.

The student doesn't just learn the right answer; it learns the teacher's "thought process" (the probability distribution across all possible words). For instance, TinyBERT managed a 7.5x compression ratio while keeping nearly 97% of the original performance on the GLUE benchmark.
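Matching the teacher's probability distribution is usually expressed as a loss on temperature-softened outputs. Below is a minimal sketch of that soft-target term, following the classic formulation of KL divergence scaled by the squared temperature. The function names and the example logits are illustrative, and practical recipes (TinyBERT included) add further terms, such as a loss on the true labels or on intermediate layers.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax; a higher temperature gives a flatter distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by temperature squared."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(teacher, student) if p > 0)
    return kl * temperature ** 2


teacher_logits = [4.0, 2.5, 0.1]  # teacher: mostly token 0, token 1 is plausible
student_logits = [3.0, 0.2, 0.1]  # student: right top answer, but misses the runner-up
print(distillation_loss(student_logits, teacher_logits))
print(distillation_loss(teacher_logits, teacher_logits))  # zero when the two agree
```

The student in this example already picks the same top token as the teacher, yet the loss is still positive because it underrates the runner-up. That extra signal is what a hard label alone would not provide.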

The downside? It's incredibly expensive. Training even a small model takes serious compute: the TinyLlama project, which pretrained a 1.1B-parameter model from scratch rather than distilling it, budgeted roughly 90 days on 16 A100-40G GPUs. Distillation adds to that bill because you also need the full teacher model and a massive dataset. If you don't have a cluster of GPUs and a month or more of time, distillation is likely out of reach, but for companies building specialized edge-AI, it provides the highest quality-to-size ratio.

Other Efficient Architectures: Factorization and Sharing

Beyond the "big three," there are a few niche techniques that punch above their weight. Low-Rank Factorization uses Singular Value Decomposition (SVD) to break big weight matrices into smaller ones. It's like rounding a long decimal to a short fraction: the value stays approximately the same, but the numbers are easier to handle.
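The saving comes from storing two thin matrices instead of one large one. The sketch below counts parameters for an m x n layer replaced by factors of rank r, and multiplies a tiny rank-1 pair back together to show the reconstruction. The helper names and the 4096 x 4096 layer size are illustrative assumptions. Real weight matrices are rarely exactly low-rank, so keeping only the top singular values gives an approximation rather than an exact copy.

```python
def dense_params(m, n):
    """Parameters in a full m x n weight matrix."""
    return m * n


def factored_params(m, n, rank):
    """Parameters in an (m x rank) factor plus a (rank x n) factor."""
    return rank * (m + n)


def matmul(a, b):
    """Plain-Python matrix product, fine for tiny examples."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


# A 4096 x 4096 layer kept at rank 256.
print(dense_params(4096, 4096) / factored_params(4096, 4096, 256))  # 8.0

# A matrix that really is rank 1 can be rebuilt exactly from 5 numbers instead of 6.
A = [[1.0], [2.0]]       # 2 x 1
B = [[3.0, 4.0, 5.0]]    # 1 x 3
print(matmul(A, B))      # [[3.0, 4.0, 5.0], [6.0, 8.0, 10.0]]
```

The lower the rank you keep, the larger the saving and the larger the approximation error, so the rank is the knob to tune against a validation set.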

Then there is Layer Sharing. Instead of having 24 different layers, the model reuses the same weights across multiple layers. ALBERT is a version of BERT that uses parameter sharing to drastically reduce the number of parameters. ALBERT-large has about 18x fewer parameters than BERT-large while performing almost as well. These methods are often combined with quantization to push the limits of what a mobile phone can handle.

The Danger Zone: When Compression Goes Wrong

It is tempting to push compression as far as possible to save money, but there is a cliff. Research suggests that once you pass an 8x compression ratio, you hit "catastrophic performance degradation." The model doesn't just get slightly worse; it stops being able to reason entirely.

The real danger is that traditional metrics like perplexity can lie to you. Apple's research team found that perplexity often looks stable even when the model's actual ability to solve problems has plummeted. They've advocated for more rigorous benchmarks like LLM-KICK to catch these subtle failures. Moreover, there's a risk of bias amplification. When you prune a model, it tends to lose the "long tail" of knowledge, meaning it might lose the ability to support minority languages or niche cultural contexts while keeping the dominant ones.
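It helps to see why the two kinds of metric can disagree: perplexity averages over every token, while a task score depends on getting a few decisive answers right. The sketch below defines both. The function names are illustrative, the inputs are made-up placeholders rather than measurements, and this is not the LLM-KICK methodology itself.

```python
import math


def perplexity(token_log_probs):
    """exp of the negative mean log-probability the model assigned to the true tokens."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def exact_match_accuracy(predictions, references):
    """Fraction of task answers that match the reference exactly."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must align")
    hits = sum(p == r for p, r in zip(predictions, references))
    return hits / len(references)


# Placeholder inputs: a model that gives probability 0.25 to every correct token.
print(perplexity([math.log(0.25)] * 8))
# Placeholder task answers: two of three correct.
print(exact_match_accuracy(["4", "Paris", "7"], ["4", "Paris", "8"]))
```

Because perplexity is a mean over thousands of mostly easy tokens, a compressed model can lose the handful of hard predictions that decide a reasoning task while the average barely moves. Tracking both numbers side by side makes that gap visible.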

Will quantization make my model lose its reasoning ability?

It depends on the bit-width. 8-bit (INT8) usually has a negligible impact on reasoning. However, moving to 4-bit or 3-bit can lead to noticeable drops in complex logic or coding tasks. This is why it's critical to test your compressed model on a specific benchmark (like MMLU) rather than relying on general metrics.

Do I need special hardware for pruned models?

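Yes, if you want a speedup from sparsity itself. Zeroing out individual weights does not make dense matrix math any faster on its own; you need hardware and kernels that can skip the zeros. NVIDIA A100 and H100 GPUs accelerate a specific 2:4 semi-structured pattern (two zeros in every group of four weights), not arbitrary unstructured sparsity. If you are using standard hardware, structured pruning (removing entire channels or layers) is much more effective because it results in standard, smaller matrices.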
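The sparsity pattern that A100-class sparse tensor cores accelerate is 2:4: in every group of four consecutive weights, at most two are non-zero. Here is a minimal sketch of pruning a row into that pattern by magnitude. The function name is illustrative, and actually getting the speedup also requires storing the result in the vendor's compressed format and running sparse-aware kernels, which this sketch does not cover.

```python
def prune_2_of_4(row):
    """Keep the two largest-magnitude weights in each group of four; zero the rest."""
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = []
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # Indices of the two weights with the largest absolute value in this group.
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(w if j in keep else 0.0 for j, w in enumerate(group))
    return pruned


row = [0.1, -0.8, 0.3, 0.05, 1.0, 0.2, -0.9, 0.0]
print(prune_2_of_4(row))  # [0.0, -0.8, 0.3, 0.0, 1.0, 0.0, -0.9, 0.0]
```

The result is exactly 50% sparse with a regular layout, which is what lets the hardware skip the zeros. Randomly scattered zeros at the same sparsity would not qualify.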

Which is better: Distillation or Quantization?

They serve different purposes. Quantization is a post-processing step to make an existing model run faster on current hardware. Distillation is a training strategy to create a fundamentally smaller model. If you have the budget and data, distillation produces a more "intelligent" small model; if you need to deploy tomorrow, use quantization.

Can I combine these techniques?

Absolutely. A common production pipeline involves distilling a large model into a smaller student, pruning the student to remove redundancies, and finally quantizing the result to 4-bit for deployment on edge devices. This "stacked" approach maximizes efficiency.

What is the risk of "catastrophic degradation"?

This occurs when you compress a model too aggressively (typically beyond 8x). The internal representations of the model break down, leading to hallucinations, repetitive loops, or a complete inability to follow instructions.

Next Steps and Troubleshooting

If you are just starting, don't jump into pruning. Start with 8-bit quantization using the bitsandbytes library; it's the industry standard for a reason and takes minutes to set up. If the model is still too large or too slow, try 4-bit quantization, but be sure to run a small validation set of your actual user queries to ensure the answers are still accurate.
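A validation pass over real queries does not need much tooling. The sketch below compares the answers of a baseline and a compressed model on the same queries and reports how often they agree. The two "models" are stand-in functions backed by dictionaries so the example runs anywhere; in practice you would pass callables that wrap your own inference code, and for free-form answers you would swap the exact-match comparison for a scorer suited to your task.

```python
from typing import Callable, Iterable


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting differences don't count."""
    return " ".join(text.lower().split())


def agreement_rate(baseline: Callable[[str], str],
                   candidate: Callable[[str], str],
                   queries: Iterable[str]) -> float:
    """Fraction of queries where the compressed model gives the same answer as the baseline."""
    queries = list(queries)
    if not queries:
        raise ValueError("need at least one query")
    same = sum(normalize(baseline(q)) == normalize(candidate(q)) for q in queries)
    return same / len(queries)


# Stand-in models (hypothetical): replace with calls into your own inference stack.
baseline_answers = {"2+2?": "4", "Capital of France?": "Paris", "17*3?": "51"}
quantized_answers = {"2+2?": "4", "Capital of France?": " paris ", "17*3?": "41"}

rate = agreement_rate(baseline_answers.get, quantized_answers.get, baseline_answers)
print(f"agreement: {rate:.0%}")
```

Keeping the queries where the two models disagree is often more useful than the rate itself, since they show which kinds of request the compressed model has started to get wrong.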

For those deploying in the EU, keep in mind that the EU AI Act (in force since August 2024, with obligations phasing in over the following years) may require you to document modifications that affect model performance. If you prune or quantize a model, keep a log of the performance delta (essentially, how much accuracy you traded for speed) so you can show what changed.

Pamela Watson

April 20, 2026 at 16:10

Everyone knows that 4-bit is basically just a gamble with your accuracy! 🙄 It's so obvious that people should just use 8-bit if they actually care about the AI not being dumb. I've tried this a million times and it's just common sense to stay safe! :)

Albert Navat

April 20, 2026 at 17:13

Tell me you've never touched a quantization-aware training loop without telling me. You're just talking about the low-hanging fruit of PTQ here. The real magic happens when you optimize the gradient flow during the compression phase, not just rounding numbers like a calculator from the 80s.

Christina Kooiman

April 22, 2026 at 11:02

It is absolutely devastating and frankly an insult to the English language that some people in this thread are barely attempting to use proper capitalization or punctuation, which is honestly just a tragedy because if we cannot maintain the basic structural integrity of our sentences, then how on earth are we supposed to expect a compressed machine learning model to maintain the structural integrity of a complex logical argument without it completely falling apart into a heap of linguistic rubble and digital nonsense!

michael T

April 22, 2026 at 15:36

This whole conversation is just a dizzying whirlpool of technical misery and I can feel my brain melting just thinking about the sheer agony of trying to fit a giant brain into a tiny box. It's like trying to squeeze a whole ocean into a thimble and it's just fundamentally heartbreaking to think about all those lost parameters drifting away into the void of digital oblivion. I'm literally shaking at the thought of a model talking in riddles because some developer wanted to save a few bucks on a cloud bill, which is just a parasitic way to treat intelligence!