SLAs and Support: What Enterprises Really Need from LLM Providers in 2026

Mar, 13 2026

When your business depends on an LLM to answer customer questions, process medical records, or automate financial approvals, a 5-second delay or a data leak isn’t just an inconvenience; it’s a legal and financial risk. That’s why enterprise teams don’t just pick the fastest model. They demand SLAs: formal, measurable promises from their LLM provider. And in 2026, those SLAs are no longer optional add-ons. They’re the contract that keeps your AI running, secure, and compliant.

What an Enterprise LLM SLA Actually Covers

Most people think SLAs are just about uptime. They’re not. A modern enterprise SLA is a 10-page document that defines performance, security, support, and compliance, all in hard numbers.

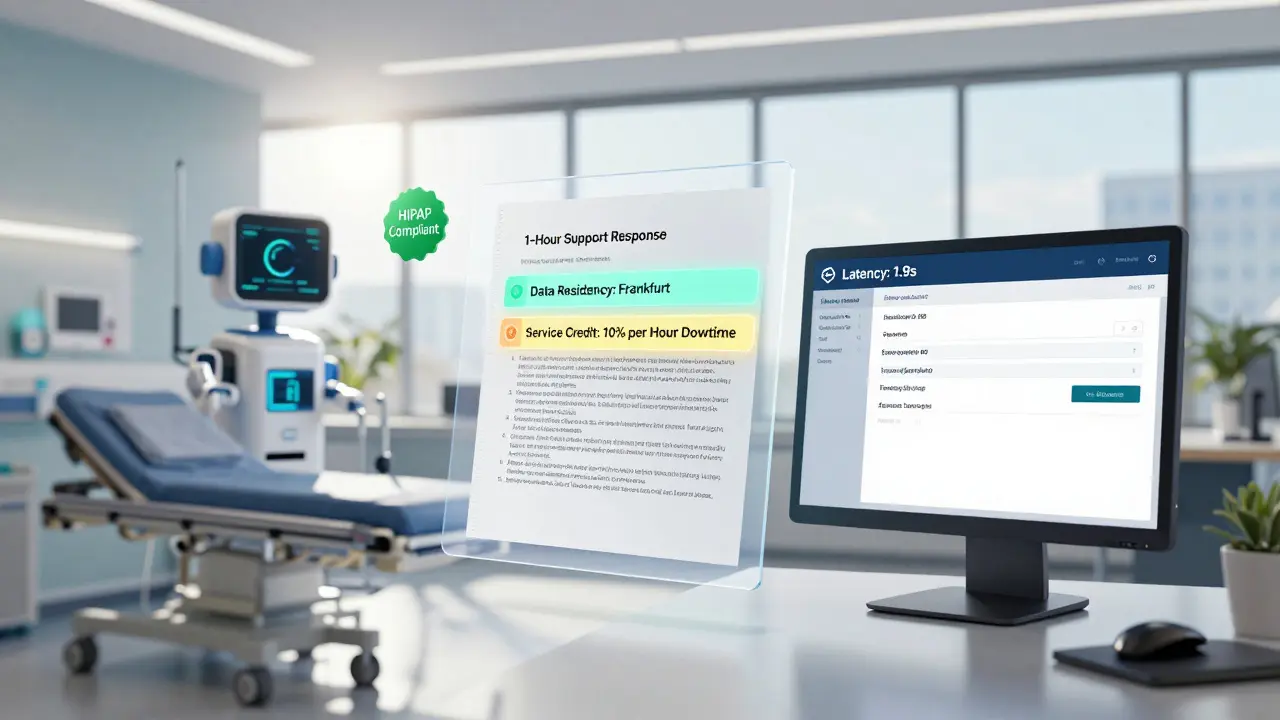

Uptime guarantees are the baseline. The standard is 99.9%, meaning no more than about 43 minutes of downtime per month. But that’s not enough for healthcare or banking. Mission-critical systems now require 99.99% uptime, which leaves just 4.3 minutes of downtime per month. Providers like Microsoft Azure OpenAI and Amazon Bedrock sell higher-availability commitments as a premium tier. If they miss the target, you get a service credit: 10% of your monthly bill for every hour of downtime beyond the limit.
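To make the arithmetic concrete, here is a minimal Python sketch of the downtime budget and the credit formula described above. It assumes a 30-day month, assumes that a partial hour of excess downtime is billed as a full hour, and assumes credits are capped at 100% of the bill; none of those details come from a specific provider's contract, and the function names are illustrative.

```python
import math

MINUTES_PER_MONTH = 30 * 24 * 60  # assumption: 30-day billing month


def downtime_budget_minutes(uptime_pct: float) -> float:
    """Minutes of downtime an uptime percentage allows per month."""
    return MINUTES_PER_MONTH * (100 - uptime_pct) / 100


def service_credit(monthly_bill: float, downtime_minutes: float,
                   uptime_pct: float, rate_per_hour: float = 0.10) -> float:
    """Credit owed: 10% of the bill per hour of downtime beyond the budget."""
    excess_minutes = max(0.0, downtime_minutes - downtime_budget_minutes(uptime_pct))
    # Assumption: any started hour counts as a full hour.
    excess_hours = math.ceil(excess_minutes / 60)
    # Assumption: the credit never exceeds the monthly bill.
    credit_fraction = min(1.0, excess_hours * rate_per_hour)
    return monthly_bill * credit_fraction


if __name__ == "__main__":
    print(f"99.9%  budget: {downtime_budget_minutes(99.9):.2f} min/month")
    print(f"99.99% budget: {downtime_budget_minutes(99.99):.2f} min/month")
    print(f"Credit for 150 min down on a $10,000 bill: ${service_credit(10_000, 150, 99.9):,.2f}")
```

Check how your own contract rounds partial hours and whether credits are capped; those two clauses change the payout more than the headline percentage does.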

Latency matters just as much. If fewer than 95% of your chatbot’s responses arrive within 3 seconds, users abandon it. SLAs now specify exact response times under normal and peak loads. During Black Friday-level traffic, 5-7 seconds might be acceptable, but only if it’s written into the contract. Vague promises like “reasonable performance” got companies burned in 2024 when unexpected throttling hit during critical moments.
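A latency clause like this is easy to check against your own request logs. The sketch below uses a nearest-rank 95th percentile and the 3-second threshold mentioned above; the sample data and function names are illustrative, and a real contract may define the percentile or the measurement window differently.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile of a list of latency samples (seconds)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


def meets_latency_sla(samples: list[float], threshold_s: float = 3.0,
                      pct: int = 95) -> bool:
    """True if the pct-th percentile latency is within the threshold."""
    return percentile(samples, pct) <= threshold_s


if __name__ == "__main__":
    normal_load = [1.0] * 19 + [6.0]      # 1 slow request in 20
    peak_load = [1.0] * 18 + [6.0] * 2    # 2 slow requests in 20
    print(meets_latency_sla(normal_load))  # p95 is 1.0 s
    print(meets_latency_sla(peak_load))    # p95 is 6.0 s
```

Run the same check separately on normal-load and peak-load windows, since the contract may set a different threshold for each.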

Security isn’t a feature; it’s a requirement. SLAs must include: AES-256 encryption for data at rest, TLS 1.3 for data in transit, SOC 2 Type II compliance, and industry-specific certifications like HIPAA for health data or FedRAMP High for government use. Google Cloud AI now offers dashboards that show you, in real time, whether your usage meets GDPR or HIPAA rules. That’s not marketing. It’s contractual.

Data residency is non-negotiable. If your company operates in the EU, you can’t let customer data cross borders. SLAs now specify which geographic regions your data will be processed in. Google Cloud AI supports 22 regions. Microsoft and Amazon offer similar options. If your SLA doesn’t say where your data lives, you can’t prove compliance, and you may already be in violation.

Support Isn’t Just a Phone Number

Enterprise support isn’t a chatbot that says “I’m sorry you’re having trouble.” It’s a team with a response SLA tied to severity levels.

Standard enterprise plans guarantee acknowledgment within 4 business hours. Premium tiers (usually $25,000+/month) offer 24/7 dedicated engineers with 1-hour response times. For financial institutions or hospitals, the highest tier demands 15-minute responses for Severity 1 outages. That means a phone call, not an email ticket.
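If you log when each ticket was opened and acknowledged, you can audit these response targets yourself. The sketch below encodes the three tiers above as a lookup table. The tier names are made up for illustration, and it measures wall-clock time, so the 4-hour standard target here ignores the "business hours" qualifier, which you would need to model from your contract's definition.

```python
from datetime import datetime, timedelta

# Response targets taken from the tiers described above.
# Tier names are illustrative, not any provider's plan names.
RESPONSE_TARGETS = {
    "standard": timedelta(hours=4),    # contractually "business hours"; treated as wall-clock here
    "premium": timedelta(hours=1),
    "critical": timedelta(minutes=15),  # Severity 1 on the highest tier
}


def response_breached(tier: str, opened_at: datetime,
                      acknowledged_at: datetime) -> bool:
    """True if the acknowledgment took longer than the tier's target."""
    return acknowledged_at - opened_at > RESPONSE_TARGETS[tier]


if __name__ == "__main__":
    opened = datetime(2026, 3, 13, 2, 0)
    acknowledged = datetime(2026, 3, 13, 2, 20)  # 20 minutes later
    for tier in RESPONSE_TARGETS:
        print(tier, "breached" if response_breached(tier, opened, acknowledged) else "ok")
```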

But here’s what most companies miss: support doesn’t just mean fixing crashes. It means helping you navigate model upgrades, audit trails, and compliance checks. Anthropic’s SLA includes third-party verified zero data retention. That’s not a feature; it’s a legal shield. If you’re audited for HIPAA, and your provider says “we don’t store your prompts,” and you have audit logs to prove it? That’s worth millions in avoided fines.

And weekends? Holidays? Many SLAs still say “business hours” without defining them. A 2025 Aloa review found that 43% of SLA disputes came from unclear support windows. Ask for a written definition of “business hours.” If they can’t give it, walk away.

Model Versioning: The Hidden Time Bomb

Imagine your AI system runs on GPT-4-turbo. One day, the provider retires it. No warning. Your app breaks. Your customers complain. Your team scrambles to migrate prompts and workflows to GPT-5, only to find it’s 30% slower and 50% more expensive.

This happened to three mid-sized banks in 2024. And none of them had a versioning clause in their SLA.

Enterprise SLAs must now include a commitment: “We will keep model version X.Y.Z available for at least 12 months after its release.” Some providers, like Azure OpenAI, offer 18-24 months. Others? They change models without notice. Gartner’s David Groom says this is the most overlooked SLA component. If you’re running mission-critical workflows, demand version retention in writing. No exceptions.
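A retention clause translates directly into a date you can put on a migration calendar. Here is a small sketch that computes the earliest date a provider could retire a version under an N-month commitment. The release date used in the example is invented, and the helper names are illustrative.

```python
import calendar
from datetime import date


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the target month's length."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_retirement(release_date: date, retention_months: int = 12) -> date:
    """First date the provider may retire the version under the clause."""
    return add_months(release_date, retention_months)


def guaranteed_days_left(release_date: date, today: date,
                         retention_months: int = 12) -> int:
    """Days of contractually guaranteed availability remaining (never negative)."""
    return max(0, (earliest_retirement(release_date, retention_months) - today).days)


if __name__ == "__main__":
    release = date(2025, 3, 13)  # hypothetical release date
    print(earliest_retirement(release, 12))
    print(earliest_retirement(release, 18))
    print(guaranteed_days_left(release, today=date(2026, 3, 1)))
```

Tracking this number per model version gives your team a concrete deadline for regression-testing the successor model.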

Who’s Leading in 2026? The Real Comparison

Not all LLM providers are built the same. Here’s how the top four stack up:

| Provider | Uptime SLA | Key Compliance Certifications | Model Version Retention | Support Response Time (Premium) | Data Residency |
|---|---|---|---|---|---|
| Microsoft Azure OpenAI | 99.9% (standard), 99.95% (premium) | FedRAMP High, HIPAA, GDPR, SOC 2, DoD IL4/IL5 | 18-24 months | 1 hour | 20+ regions |
| Amazon Bedrock | 99.9% (standard) | SOC 2, GDPR, HIPAA (limited) | 12 months | 1 hour | 15+ regions |
| Google Vertex AI | 99.9% (standard) | GDPR, HIPAA, SOC 2 | 12 months | 2 hours | 22 regions |
| Anthropic (Claude 4) | 99.9% (standard) | Zero data retention (audited), GDPR | 12 months | 1 hour | 10 regions (expanding) |

Microsoft leads in compliance. If you’re in healthcare or government, Azure OpenAI is often the only viable option. Amazon Bedrock wins on cost and model choice: you can switch between 60+ models without changing your API. Google offers the strongest multimodal performance, but its SLA documentation is the weakest. Anthropic? If data privacy is your top concern, their zero retention policy is unmatched. But they’re still catching up on regional coverage.

The Hidden Costs No One Talks About

Your contract says $10,000/month. But the real cost? Often 20-40% higher.

Why? Because SLAs force you to build extra infrastructure. You need:

- Dedicated GPU clusters to avoid shared resource throttling

- Advanced observability tools to monitor uptime, latency, and compliance simultaneously

- Legal teams to audit SLA language and negotiate penalties

- Security teams to enforce encryption and access controls

A 2025 Helicone.ai survey of 87 enterprises found the average implementation team required 2.5 full-time equivalents just to manage the SLA. That’s not included in the provider’s price tag. And if you’re not tracking it? You’re underbudgeting.

What You Must Demand Before Signing

Here’s a checklist every enterprise team should run before signing an LLM contract:

- Is uptime guaranteed with clear penalties? (Not “best efforts”)

- Is latency measured under real-world load? (Not lab conditions)

- Does the SLA specify exact data residency locations?

- Are compliance certifications listed and verifiable?

- Is model version retention defined? (Minimum 12 months)

- Is support response time defined for weekends/holidays?

- Is there a clear, written process for claiming service credits?

- Does the provider offer real-time compliance dashboards?

- Have you tested their API under 300% of expected peak load?

If even one answer is “we’ll figure it out,” walk away. You’re not buying a tool. You’re buying operational certainty.

Why This Matters More Than the Model

It’s 2026. The difference between GPT-4 and Claude 4 is shrinking. What’s growing? The gap between providers who treat SLAs as marketing fluff and those who treat them as the core of their product.

The EU AI Act is now in force. Regulators are auditing AI systems. Insurance companies are demanding proof of uptime and data handling. Investors are asking for SLA documentation before funding.

LLMs aren’t just code. They’re legal liabilities. And the provider who gives you a 99.9% uptime guarantee without explaining how they’ll handle a data breach? They’re not helping you. They’re setting you up to fail.

The smartest enterprises don’t ask, “Which model is fastest?” They ask: “What happens when this breaks, and who’s on the line to fix it?”

Do all LLM providers offer SLAs?

No. Open-source models like Llama 3 or Mistral don’t come with SLAs. Commercial providers like Microsoft, Amazon, Google, and Anthropic do, but the quality varies. Many startups offer SLAs as optional upsells. Enterprise clients should avoid providers who don’t include SLAs as a standard part of their contract. If they can’t define uptime, latency, or support response times in writing, they’re not ready for production use.

Can I negotiate my own SLA terms?

Yes, if you’re spending over $100,000 per year. Major providers allow customization for strategic accounts. Common negotiated terms include extended model retention, custom data residency zones, dedicated infrastructure, and higher uptime guarantees. But don’t expect flexibility if you’re on a $5,000/month plan. SLA negotiation is a privilege for high-volume users.

What’s the biggest mistake companies make with LLM SLAs?

They focus only on uptime. The real risks are data leakage, model drift, and compliance violations. A 99.9% uptime SLA means nothing if your prompts are being stored, your model is suddenly upgraded without warning, or you can’t prove you’re following HIPAA during an audit. SLAs must cover security, data handling, and version control, not just availability.

How do I verify if a provider is meeting their SLA?

You need observability tools. Tools like Helicone.ai, Logz.io, and AWS CloudWatch offer dashboards that track API response times, error rates, and compliance metrics in real time. Some providers, like Google Vertex AI, even supply automated audit logs. Don’t rely on provider reports. Set up your own monitoring and cross-check it monthly. If your tools show 99.7% uptime but the provider claims 99.9%, you have grounds for a service credit claim.
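The cross-check itself can be very simple. The sketch below assumes you already collect evenly spaced health-probe results (one boolean per probe, however you gather them) and compares measured availability with the guaranteed figure. The data is synthetic and the function names are illustrative; real SLAs often define "downtime" more narrowly than "a probe failed," so treat a mismatch as a reason to investigate rather than proof.

```python
def measured_uptime_pct(probe_results: list[bool]) -> float:
    """Percentage of probes that succeeded (assumes evenly spaced probes)."""
    if not probe_results:
        raise ValueError("need at least one probe result")
    return 100 * sum(probe_results) / len(probe_results)


def credit_claim_supported(probe_results: list[bool],
                           guaranteed_pct: float = 99.9) -> bool:
    """True if your own measurements fall below the guaranteed uptime."""
    return measured_uptime_pct(probe_results) < guaranteed_pct


if __name__ == "__main__":
    # Synthetic month: 1,000 probes, 3 failures.
    probes = [True] * 997 + [False] * 3
    print(f"Measured uptime: {measured_uptime_pct(probes):.2f}%")
    print("Claim supported:", credit_claim_supported(probes, guaranteed_pct=99.9))
```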

Are SLAs worth the extra cost?

Absolutely, if you’re using LLMs for customer-facing, regulated, or high-value operations. Gartner estimates the average cost of AI downtime in regulated industries is $5,600 per minute. A $10,000/month SLA with 99.99% uptime and zero data retention could prevent a single $1M fine. The ROI isn’t theoretical. It’s documented in healthcare, finance, and government contracts from 2024-2025.
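As a rough worked example, you can combine the $5,600-per-minute figure quoted above with the downtime each uptime tier permits. This sketch assumes a 30-day month and a worst case in which the provider uses its entire downtime allowance; it is back-of-the-envelope arithmetic, not a forecast.

```python
MINUTES_PER_MONTH = 30 * 24 * 60   # assumption: 30-day month
COST_PER_MINUTE = 5_600            # per-minute downtime cost quoted in the article


def allowed_downtime_minutes(uptime_pct: float) -> float:
    """Downtime per month that still satisfies the uptime guarantee."""
    return MINUTES_PER_MONTH * (100 - uptime_pct) / 100


def worst_case_exposure(uptime_pct: float,
                        cost_per_minute: float = COST_PER_MINUTE) -> float:
    """Monthly downtime cost if the provider uses its whole allowance."""
    return allowed_downtime_minutes(uptime_pct) * cost_per_minute


if __name__ == "__main__":
    standard = worst_case_exposure(99.9)
    premium = worst_case_exposure(99.99)
    print(f"99.9%  worst case: ${standard:,.0f}/month")
    print(f"99.99% worst case: ${premium:,.0f}/month")
    print(f"Exposure removed by the higher tier: ${standard - premium:,.0f}/month")
```

Compare the last figure with the price difference between tiers to see whether the upgrade pays for itself under your own cost-per-minute estimate.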

What Comes Next?

By 2027, providers who don’t offer transparent, multi-dimensional SLAs will lose market share. The winners? Those who treat SLAs as a competitive advantage, not a checkbox.

Microsoft’s new “SLA 2.0” uses AI to predict outages before they happen. Google is rolling out real-time compliance dashboards. Anthropic is expanding data residency. These aren’t upgrades. They’re strategic shifts.

If your LLM provider still uses vague language like “reasonable efforts” or “best available performance,” they’re still in 2023. The enterprise market moved on. You should too.
