Task-Specific Prompt Blueprints for Search, Summarization, and Q&A

Apr, 20 2026

Apr, 20 2026

Stop treating your LLM prompts like magic spells. If you're still writing a new prompt from scratch every time you want a different result, you're wasting time and risking inconsistent outputs. The real secret to scaling AI applications isn't just "better wording"-it's the use of prompt engineering via standardized blueprints. Think of a blueprint not as a single prompt, but as a reusable architectural frame that ensures your AI behaves exactly the same way, whether you're using GPT-4o, Claude 3.5, or a local Llama model.

The core problem with ad-hoc prompting is provider lock-in. If you spend weeks perfecting a prompt for one specific model, you're stuck when a faster or cheaper model hits the market. A Prompt Blueprint is a standardized framework for designing natural language inputs that shields your application from provider-specific implementation details. It turns prompting from an art into a repeatable process.

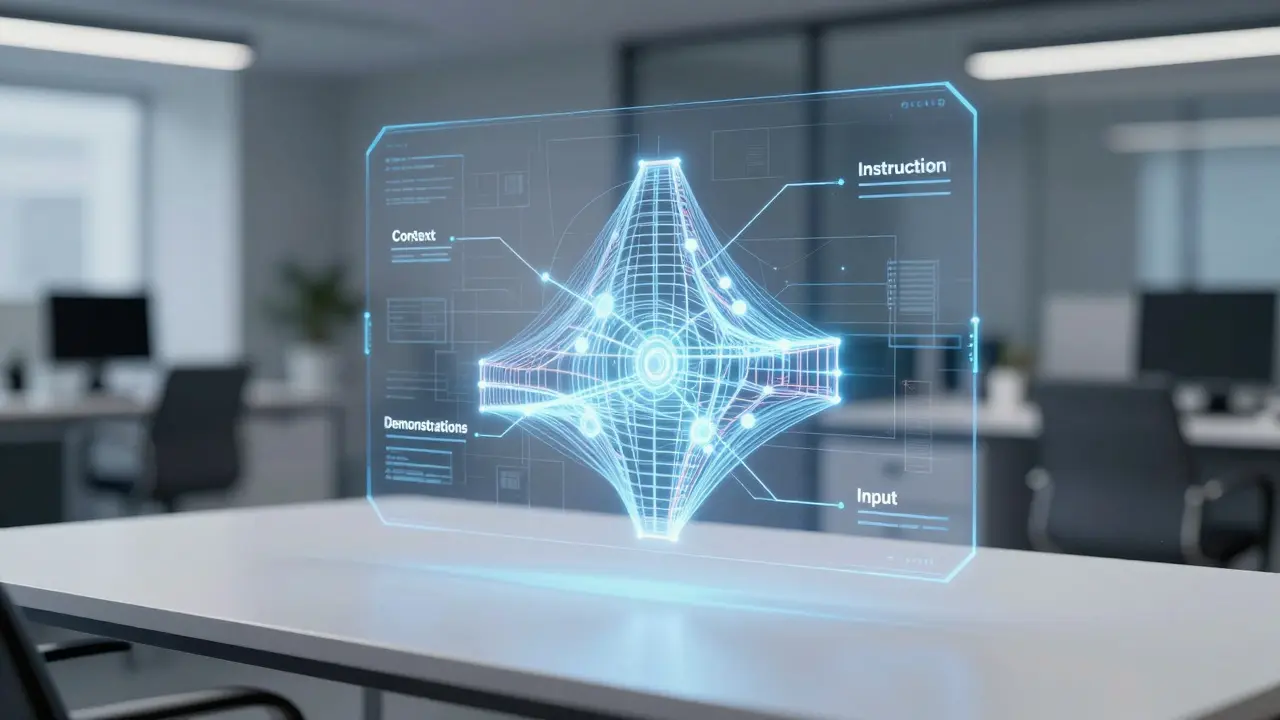

The Core Anatomy of a Blueprint

Before diving into specific tasks, you need to understand what actually goes into a high-performing blueprint. A raw prompt is just text; a blueprint is a structured object. To get professional results, your blueprints should always include these four pillars:

- The Instruction: A clear, direct command of what the model needs to do.

- The Context: Domain-specific data or background information (e.g., "You are analyzing medical journals from 2025").

- Demonstrations: Few-shot examples that show the model exactly how the output should look.

- The Input: The actual user query or data being processed.

By separating these elements, you can swap the "Context" or "Input" without breaking the overall logic of the prompt. This is how enterprise-grade AI apps maintain stability.

Blueprints for Intelligent Search and Retrieval

Search isn't just about finding keywords; it's about relevance and intent. When you build a blueprint for search, you aren't just asking for a list of links. You're designing a system for information retrieval.

The most effective search blueprints use JSON Schema to define how the LLM should interact with tools. Instead of telling the AI to "search for X," the blueprint defines a tool with specific parameters. For example, if a user asks for "the best laptops under $1000," the blueprint forces the LLM to extract "price_max: 1000" and "category: laptops" into a structured format before the search even happens.

This approach removes the "guessing game." By standardizing the tool call, you ensure that the search query sent to your database is precise, leading to much higher accuracy in the results presented to the end user.

Mastering Summarization with Domain Tailoring

Most people just tell an AI to "summarize this text," and they get a generic, boring paragraph. If you want a summary that actually adds value, you need domain-specific tailoring. A summary for a legal contract looks nothing like a summary for a medical trial or a product review.

For high-stakes summaries, consider a technique called Active Prompting. Instead of one shot, the blueprint triggers the model to generate multiple versions of the summary with intermediate reasoning steps. The system then estimates the model's uncertainty. If the model is unsure about a specific detail, the blueprint can be tuned to prioritize accuracy over brevity.

| Domain | Blueprint Focus | Key Attribute | Desired Outcome |

|---|---|---|---|

| Legal | Clause Extraction | Precision | Zero-loss of critical terms |

| Medical | Symptom/Result Mapping | Accuracy | Clinical validity |

| Business | Executive Highlights | Brevity | Actionable insights |

Precision Q&A using Chain-of-Thought

When it comes to Question and Answering (Q&A), the biggest enemy is "hallucination." LLMs often jump to the wrong conclusion because they try to answer the whole problem at once. The fix is Chain-of-Thought (CoT) Prompting, which is a method that provides the model with a sequence of intermediate reasoning steps to reach a final answer.

You don't need a complex setup to trigger this. Simple phrases like "Let's think step by step" can drastically improve performance. In actual benchmarks, using a step-by-step blueprint improved accuracy on mathematical datasets like GSM8K from around 40% to over 43%. It sounds small, but in a production environment, a 3% jump in accuracy can mean thousands of fewer customer support tickets.

For even better results, use Expert Prompting. Instead of just saying "be a helpful assistant," your blueprint should instruct the AI to adopt a specific identity. For instance, "You are a Senior Cloud Architect with 20 years of experience in AWS." This shifts the model's internal probability weights toward professional, technical language and away from generic conversational filler.

Practical Implementation: The Blueprint Workflow

How do you actually move from a text prompt to a blueprint? Follow this workflow:

- Identify the Job: Is this a search task, a summary, or a complex Q&A?

- Define the Schema: If the task requires tools (like search), define the JSON parameters first.

- Build the Few-Shot Set: Create 3-5 examples of "Input $\rightarrow$ Reasoning $\rightarrow$ Output."

- Test Across Models: Run the same blueprint through OpenAI and Anthropic. If the results vary wildly, tighten your instructions.

- Version Control: Treat your blueprints like code. Save them in a registry (like PromptLayer) so you can roll back if a new model update breaks your logic.

One pro tip: Always show the model what to do rather than what not to do. Telling a model "don't be wordy" is less effective than providing an example of a concise response and saying "follow this style."

What is the difference between a prompt and a prompt blueprint?

A prompt is a single, often ad-hoc input given to an AI. A prompt blueprint is a structured template that separates instructions, context, and examples from the actual user input. This allows the same logical framework to be reused across different users and different LLM providers without rewriting the core logic.

Does Chain-of-Thought work for every task?

Not necessarily. CoT is incredible for reasoning, math, and complex Q&A where the path to the answer matters. However, for simple classification or sentiment analysis, CoT can actually increase latency and token costs without providing any meaningful boost in accuracy.

How do I stop my summarization blueprints from missing key details?

Use domain-specific tailoring and Active Prompting. Instead of a general summary request, specify exactly which entities or data points must be extracted. Providing a few-shot example of a "perfect" summary for your specific industry helps the model understand what counts as a "key detail."

Can prompt blueprints help with LLM costs?

Yes, indirectly. By using a standardized blueprint, you can more easily identify where prompts are too long or where you can switch to a smaller, cheaper model for simple tasks. It also prevents the need for expensive fine-tuning in many cases, as high-quality few-shot blueprints can often match fine-tuned performance.

Why use JSON Schema in search blueprints?

JSON Schema provides a strict contract between the LLM and your backend. It ensures the AI extracts the correct parameters (like date ranges or product IDs) in a format your code can actually use, eliminating the risk of the AI returning a conversational sentence when your system expects a structured query.