Vision-Language Applications: Using Multimodal LLMs to See and Reason

Apr, 19 2026

The Engine Under the Hood: How These Models Work

Not all multimodal models are built the same. Depending on whether you need raw speed or pinpoint accuracy, the architecture changes. NVIDIA's NVLM family illustrates the three main ways these systems combine pixels and words.

First, there is the decoder-only architecture (NVLM-D). This approach converts image features into tokens and feeds them directly into the language model's input sequence alongside the text. If you're doing heavy-duty document processing or OCR, this is your best bet, as it typically hits 5-8% higher accuracy on text-heavy images. The trade-off? It's a resource hog, requiring about 25-30% more compute than leaner designs.

Then there's the cross-attention approach (NVLM-X). Instead of adding image tokens to the input sequence, this method integrates visual features into the language model through dedicated cross-attention layers. It's significantly more efficient (roughly 15-20% better for high-resolution images), which makes it a favorite for satellite imagery and medical scans. However, it can struggle slightly with the fine details of a dense text document.

Finally, the hybrid approach (NVLM-H) tries to give you the best of both worlds, balancing the precision of the decoder-only design with the efficiency of cross-attention. Most general-purpose applications lean toward this hybrid style to keep costs down without sacrificing too much quality.

| Architecture Type | Best Use Case | Key Strength | Main Drawback |
|---|---|---|---|
| Decoder-only (NVLM-D) | Document OCR / invoices | Highest text accuracy | High compute cost |
| Cross-attention (NVLM-X) | Satellite / medical imaging | Computational efficiency | Lower OCR precision |
| Hybrid (NVLM-H) | General-purpose AI | Balanced performance | Average across all metrics |
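To see why cross-attention scales better with image resolution, here is a rough back-of-the-envelope sketch. It is an illustration under simplifying assumptions, not NVLM's actual implementation: it counts query-key pairs in one attention layer. In the decoder-only design, image tokens lengthen the self-attention sequence; in the cross-attention design, text tokens attend to image features without the image tokens joining the sequence. Heads, causal masking, MLP cost, and the fact that real models insert cross-attention only in some layers are all ignored, and the function names are hypothetical.

```python
def decoder_only_pairs(text_tokens: int, image_tokens: int) -> int:
    """Decoder-only: image tokens join the text in a single self-attention sequence."""
    seq_len = text_tokens + image_tokens
    return seq_len * seq_len


def cross_attention_pairs(text_tokens: int, image_tokens: int) -> int:
    """Cross-attention: text self-attends, and text queries also attend to image keys."""
    return text_tokens * text_tokens + text_tokens * image_tokens


TEXT_TOKENS = 512  # assumed prompt length
for image_tokens in (256, 1024, 4096):  # larger counts stand in for higher-resolution images
    d = decoder_only_pairs(TEXT_TOKENS, image_tokens)
    c = cross_attention_pairs(TEXT_TOKENS, image_tokens)
    print(f"{image_tokens:>5} image tokens: decoder-only {d:,} vs cross-attention {c:,} ({d / c:.1f}x)")
# Printed ratios: 1.5x, 3.0x, 9.0x
```

Under these assumptions the ratio works out to (text + image) / text, so the gap grows linearly with the number of image tokens, which matches the intuition that cross-attention pays off most for high-resolution inputs.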

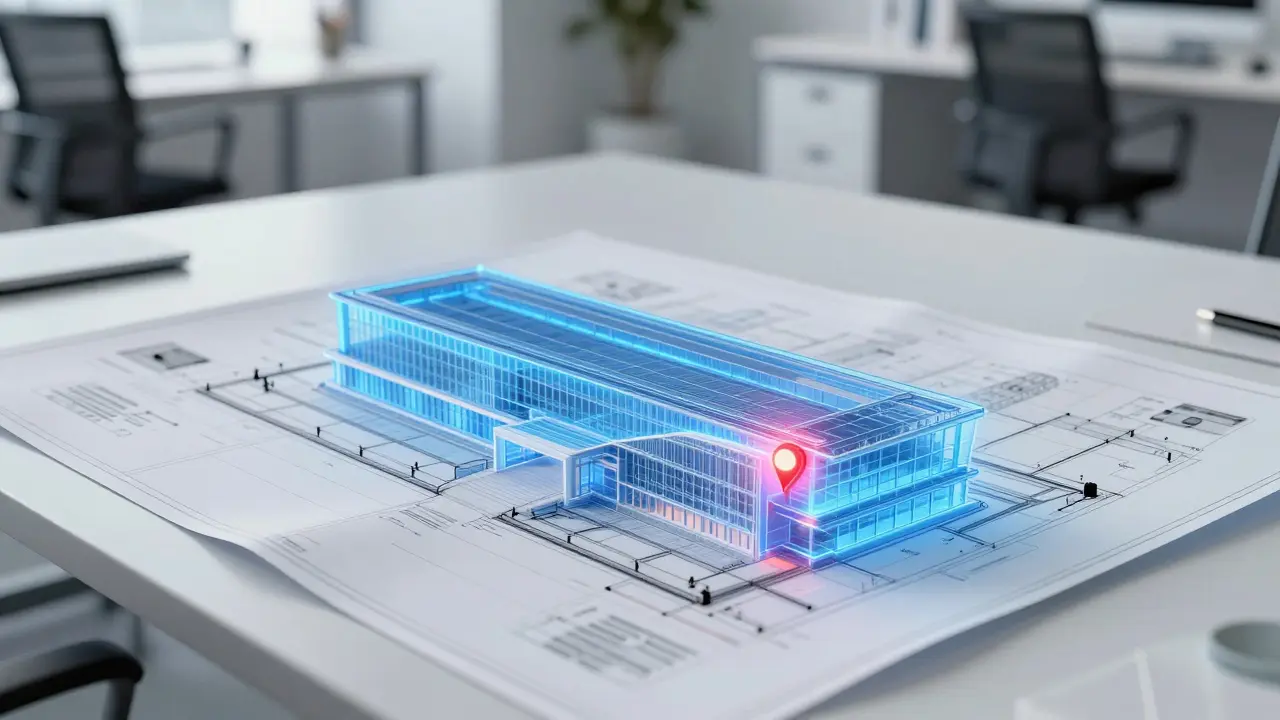

Real-World Applications and the Open-Source Surge

We've reached a point where open-source models are no longer just "cheap alternatives"; they are genuine competitors. GLM-4.6V, an open-source multimodal model released by Z.ai, competes with proprietary systems in visual reasoning and OCR. It can process nearly 2,500 tokens per second on a single NVIDIA A100 GPU, and in some benchmarks it beats proprietary giants like Gemini-1.5-Pro while using 40% fewer vision tokens.

Where are these models actually being used?

- Financial Services: About 42% of firms use them for automated document processing. Instead of staff manually checking receipts, the VLM extracts the data and flags anomalies instantly.

- Healthcare: 31% of organizations use them for medical imaging analysis. A model can highlight a potential lesion in an X-ray and provide a descriptive summary for the doctor to review.

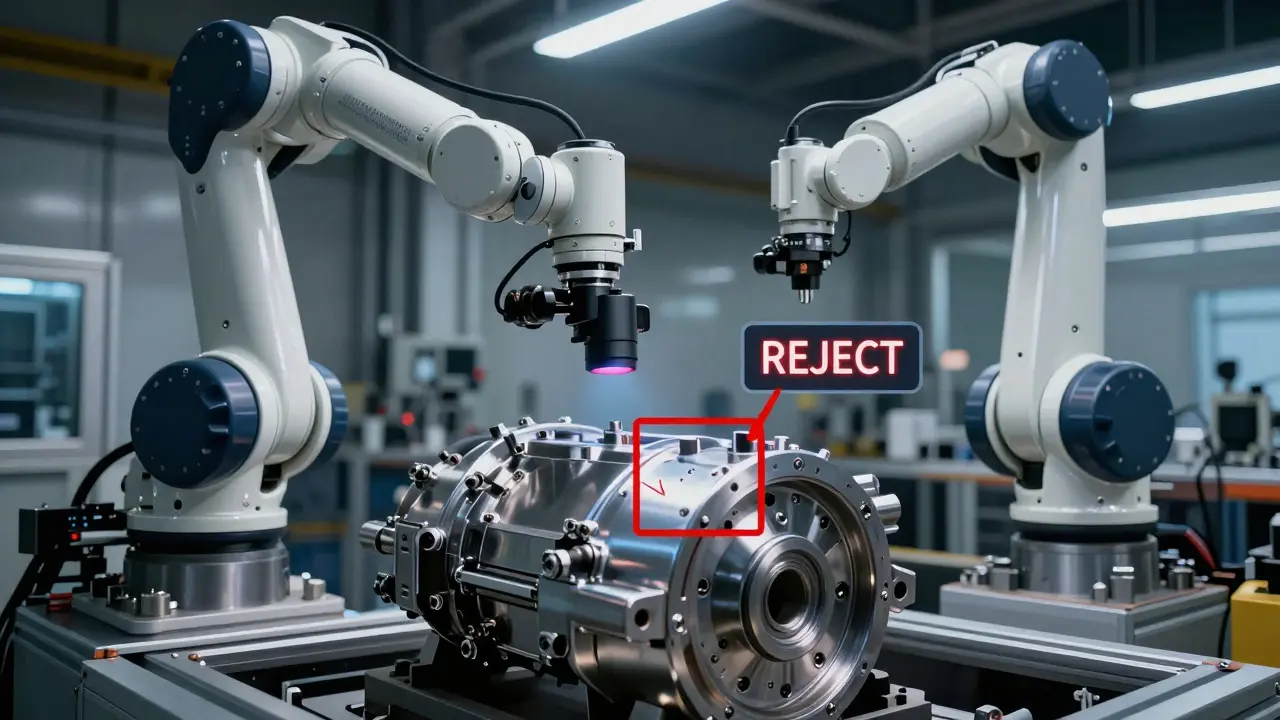

- Manufacturing: 28% of companies use VLMs for quality control. A camera on the assembly line identifies a scratch on a part, and the model triggers a rejection alert.

- Embodied AI: This is the next frontier. By using specialized architectures like Janus, which uses separate visual encoding pathways for "understanding" and "generation," researchers have improved robotics command interpretation by 18%.
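The financial-services workflow above (extract, then flag anomalies) usually pairs the VLM with ordinary validation code, which also catches some hallucinated numbers. Here is a minimal sketch, assuming the VLM has been prompted to return JSON matching a hypothetical receipt schema; the field names and the function are illustrative, not part of any product's API.

```python
from decimal import Decimal, InvalidOperation


def flag_receipt_anomalies(extracted: dict, tolerance: Decimal = Decimal("0.01")) -> list[str]:
    """Return human-readable flags for a receipt dict extracted by a VLM.

    Assumes the hypothetical schema
    {"line_items": [{"description": ..., "amount": ...}], "tax": ..., "total": ...}
    with amounts given as strings or numbers.
    """
    try:
        # Decimal avoids binary floating-point rounding on currency values.
        amounts = [Decimal(str(item["amount"])) for item in extracted.get("line_items", [])]
        tax = Decimal(str(extracted.get("tax", "0")))
        total = Decimal(str(extracted["total"]))
    except (KeyError, InvalidOperation) as exc:
        # A missing or non-numeric field is itself worth flagging for human review.
        return [f"unparseable field: {exc!r}"]

    flags = []
    if not amounts:
        flags.append("no line items extracted")
    if any(amount < 0 for amount in amounts):
        flags.append("negative line-item amount")
    # Line items plus tax should reconcile with the stated total.
    computed = sum(amounts, Decimal("0")) + tax
    if abs(computed - total) > tolerance:
        flags.append(f"total mismatch: items+tax={computed}, stated total={total}")
    return flags


receipt = {
    "line_items": [
        {"description": "Taxi", "amount": "23.40"},
        {"description": "Lunch", "amount": "18.60"},
    ],
    "tax": "3.36",
    "total": "48.36",
}
print(flag_receipt_anomalies(receipt))
# ['total mismatch: items+tax=45.36, stated total=48.36']
```

Keeping this check outside the model means a misread digit ends up in a review queue instead of in the ledger.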

The Price of Power: Implementation Challenges

If this sounds like magic, the reality of deployment is more grounded. Running a top-tier open-source model with over 70 billion parameters isn't something you do on a laptop. You need serious hardware (think NVIDIA A100s or H100s), and scaling up a deployment can cost $12,000 to $15,000 in GPU resources.

One of the biggest headaches for developers is "token bloat." While models like GLM-4.6V boast a 128K-token context window, high-resolution images eat up that space rapidly. It's common for a few detailed images to consume 80% of the context window before you've typed a single sentence. To counter this, about 68% of successful enterprise deployments use vision token compression techniques, which shrink the visual data without losing its core meaning.

There's also the issue of "multimodal hallucinations." The AI might confidently tell you there's a cat in a photo when there's actually just a very cat-shaped cloud. Current benchmarks show hallucination rates 8-12% higher in multimodal models than in text-only models. This makes them risky in high-stakes settings such as medical record digitization, where accuracy can drop from 97% to 82% on messy handwriting.
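The token-bloat problem is easy to quantify with a rough budget. This sketch makes several assumptions: a ViT-style encoder with 14-pixel patches (a common choice, but model-specific), images fed at full resolution without downscaling, a "128K" window of 131,072 tokens, and compression by merging each 2x2 block of neighboring patch tokens into one token. That last technique is only one of several compression approaches in use, and all names here are illustrative.

```python
import math

CONTEXT_WINDOW = 131_072  # assumed size of a "128K" context window


def vision_tokens(width: int, height: int, patch: int = 14, merge: int = 1) -> int:
    """Estimate visual tokens for one image.

    patch: side length in pixels of each square patch (assumed 14).
    merge: side of the square block of patch tokens merged into one token (2 -> 2x2).
    """
    cols = math.ceil(width / patch)   # partial patches at the edge count as full ones
    rows = math.ceil(height / patch)
    return math.ceil(cols / merge) * math.ceil(rows / merge)


images = [(2048, 2048)] * 4  # four detailed, high-resolution images
for merge in (1, 2):
    used = sum(vision_tokens(w, h, merge=merge) for w, h in images)
    print(f"merge={merge}: {used:,} tokens ({used / CONTEXT_WINDOW:.1%} of context)")
# merge=1: 86,436 tokens (65.9% of context)
# merge=2: 21,904 tokens (16.7% of context)
```

A 2x2 merge cuts the visual footprint by roughly 4x. In practice many models also downscale large images before encoding, so real counts are usually lower than this worst case.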

Moving Toward Specialized Intelligence

We are moving away from the "one model does everything" era. The trend for 2026 is hyper-specialization: experts predict that 60% of new models will focus on a single niche, such as legal document analysis or autonomous drone navigation.

We're also seeing a massive push toward efficiency. The goal is to cut vision token requirements by 50%, allowing these models to run on smaller, cheaper hardware. This matters because training a single 70B-parameter VLM can consume roughly 1,200 MWh of electricity, a staggering environmental and financial cost.

As these systems become more integrated, they aren't just tools we call through an API; they are becoming the "eyes" of the enterprise. From the EU AI Act's transparency requirements for how these models make decisions to the rise of native tool use in robotics, the integration of vision and language is fundamentally changing how we interact with machines.

What is the difference between a standard LLM and a VLM?

A standard Large Language Model (LLM) only processes text. A Vision-Language Model (VLM) can "see" by processing visual data (images or video) and text simultaneously. Instead of just reading a description of a chart, a VLM can look at the chart itself and answer questions about the data trends it sees.
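From a developer's point of view, "processing images and text simultaneously" usually means sending both in one request. The sketch below builds a chat request in the message format used by OpenAI-compatible chat APIs (which many open-source serving stacks also accept), with the image embedded as a base64 data URL. It only constructs the payload and sends nothing; the model name and the placeholder image bytes are hypothetical.

```python
import base64
import json


def build_vision_request(image_bytes: bytes, question: str, model: str = "example-vlm") -> dict:
    """Build a chat request that pairs one PNG image with a text question."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": model,  # hypothetical model name
        "messages": [
            {
                "role": "user",
                # Text and image travel together as parts of a single user message.
                "content": [
                    {"type": "text", "text": question},
                    {"type": "image_url", "image_url": {"url": f"data:image/png;base64,{encoded}"}},
                ],
            }
        ],
    }


# Placeholder bytes stand in for a real PNG of a chart.
payload = build_vision_request(b"\x89PNG...", "What trend does this chart show?")
body = json.dumps(payload)  # JSON body for a chat-completions style endpoint
print([part["type"] for part in payload["messages"][0]["content"]])  # ['text', 'image_url']
```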

Are open-source multimodal models as good as GPT-5 or Gemini?

In specific areas like OCR and document reasoning, open-source models like GLM-4.6V are now competitive and sometimes even superior, often while using fewer resources. However, proprietary models still generally lead in complex, long-form video understanding, where they maintain a 12-15% accuracy advantage on benchmarks like Video-MME.

Why do images take up so much of the context window?

Images are split into small square "patches," and each patch (or a small group of neighboring patches) becomes a token. High-resolution images require more patches to preserve detail, so they consume a large share of the model's context window. This is why developers use token compression to reduce the visual footprint without losing essential information.
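The patching step itself is simple. This minimal sketch assumes a single-channel (grayscale) image stored as nested lists and non-overlapping square patches, and it cuts the image into flattened patches in row-major order. In a real vision encoder, each flattened patch would then be projected to an embedding vector; that step is omitted here, and the function name is illustrative.

```python
def patchify(image: list[list[int]], patch: int) -> list[list[int]]:
    """Split an H x W grayscale image into flattened, non-overlapping patches (row-major)."""
    height, width = len(image), len(image[0])
    if height % patch or width % patch:
        raise ValueError("image sides must be multiples of the patch size")
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            # Flatten one patch x patch block into a single vector of pixel values.
            patches.append(
                [image[r][c] for r in range(top, top + patch) for c in range(left, left + patch)]
            )
    return patches


# A 4 x 4 "image" whose pixel values are 0..15, cut into 2 x 2 patches.
image = [[row * 4 + col for col in range(4)] for row in range(4)]
tokens = patchify(image, patch=2)
print(len(tokens))  # 4
print(tokens[0])    # [0, 1, 4, 5]
```

Doubling the image's width and height quadruples the number of patches, which is why resolution drives context usage so sharply.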

What is 'Embodied AI' in the context of VLMs?

Embodied AI refers to AI that has a physical body, such as a robot. VLMs enable this by letting the robot interpret commands about what it sees (e.g., "pick up the red cup") and reason about its environment in real time, bridging the gap between digital intelligence and physical action.
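One common bridge between a VLM's reasoning and a robot's actions is spatial grounding: the model is asked to return a bounding box for the object named in a command, and the controller converts that box into pixel coordinates. Coordinate conventions differ between models (pixels, 0-1 fractions, or a 0-1000 normalized grid), so this sketch simply assumes a 0-1000 grid. The `Box` type and the function are hypothetical, and mapping pixels to real-world positions would still require the robot's own camera calibration.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Bounding box in an assumed 0-1000 normalized coordinate grid."""
    x1: float
    y1: float
    x2: float
    y2: float


def box_center_pixels(box: Box, width: int, height: int, scale: float = 1000.0) -> tuple[int, int]:
    """Convert a normalized box to the pixel coordinates of its center."""
    coords = (box.x1, box.y1, box.x2, box.y2)
    # Reject malformed output rather than sending the robot to a made-up location.
    if not all(0 <= v <= scale for v in coords) or box.x1 > box.x2 or box.y1 > box.y2:
        raise ValueError(f"invalid box from model: {box}")
    cx = (box.x1 + box.x2) * width / (2 * scale)
    cy = (box.y1 + box.y2) * height / (2 * scale)
    return round(cx), round(cy)


# Hypothetical model answer for "pick up the red cup" on a 1280 x 720 camera frame.
cup = Box(400, 500, 520, 700)
print(box_center_pixels(cup, 1280, 720))  # (589, 432)
```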

How can I reduce hallucinations in a vision-language application?

Two practical steps help. First, use instruction-tuned backbones rather than base models, which improves alignment with human expectations. Second, implement modality-specific preprocessing pipelines that help the model focus on the most relevant parts of an image, reducing the chance of the AI "imagining" details that aren't there.
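As one example of modality-specific preprocessing, the sketch below splits a large image into a grid of tiles that roughly preserves its aspect ratio. This keeps fine details such as small print from being blurred away when the image is shrunk to the encoder's input size. The approach is similar in spirit to the dynamic high-resolution tiling used by some open VLMs, but the tile limit of 6 and the function names are assumptions, not any specific model's settings. The boxes use (left, top, right, bottom) order, the same order Pillow's `Image.crop` expects.

```python
def choose_grid(width: int, height: int, max_tiles: int = 6) -> tuple[int, int]:
    """Pick (cols, rows) with cols * rows <= max_tiles whose shape best matches the image.

    Ties in aspect-ratio error are broken in favor of more tiles (more detail).
    """
    if max_tiles < 1:
        raise ValueError("max_tiles must be at least 1")
    target = width / height
    candidates = [
        (cols, rows)
        for cols in range(1, max_tiles + 1)
        for rows in range(1, max_tiles + 1)
        if cols * rows <= max_tiles
    ]
    return min(candidates, key=lambda g: (abs(g[0] / g[1] - target), -g[0] * g[1]))


def tile_boxes(width: int, height: int, max_tiles: int = 6) -> list[tuple[int, int, int, int]]:
    """Return (left, top, right, bottom) crop boxes covering the image, row by row."""
    cols, rows = choose_grid(width, height, max_tiles)
    tile_w, tile_h = width / cols, height / rows
    return [
        (round(c * tile_w), round(r * tile_h), round((c + 1) * tile_w), round((r + 1) * tile_h))
        for r in range(rows)
        for c in range(cols)
    ]


# A scanned A4 page at 150 dpi (1240 x 1754 pixels) is taller than it is wide.
print(choose_grid(1240, 1754))      # (2, 3)
print(len(tile_boxes(1240, 1754)))  # 6
```

Each tile would then be resized to the encoder's native resolution; some pipelines also add a downscaled thumbnail of the whole image so the model keeps global context.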