Category: AI & Machine Learning - Page 2

Ethical Futures for Generative AI: Ensuring Equitable Access and Global Impact

Generative AI is transforming the world, but it benefits everyone only if we ensure equitable access and ethical use. This article explores bias, IP rights, global access, and accountability to build AI that works for everyone.

Scheduling Strategies to Maximize LLM Utilization During Scaling

Smart scheduling can boost LLM throughput by 3.7x and cut costs by 87%. Learn how continuous batching, sequence prediction, and token budgeting unlock GPU efficiency at scale.

NLP Pipelines vs End-to-End LLMs: When to Use Modular Systems vs Prompt-Based Models

NLP pipelines and end-to-end LLMs aren't rivals; they're partners. Learn when to use each, how they compare in cost and accuracy, and why the smartest systems combine both for speed, precision, and scalability.

Enterprise Integration of Vibe Coding: Embedding AI into Existing Toolchains

Enterprise vibe coding embeds AI into development workflows to cut time-to-market by 40% while maintaining security and compliance. Learn how top companies are using it to build internal tools, modernize legacy systems, and empower developers rather than replace them.

Calibrating Confidence in Non-English Large Language Model Outputs

LLMs often answer overconfidently in non-English languages because they’re trained mostly on English data. Without proper calibration, their confidence scores don’t match real accuracy, putting users at risk in healthcare, legal, and customer service scenarios.

Rotary Position Embeddings and ALiBi: How Modern LLMs Handle Position Without Learned Embeddings

Rotary Position Embeddings and ALiBi are the two leading methods modern LLMs use to handle sequence position without learned embeddings. They enable longer context and better length extrapolation, and they have largely replaced older positional encoding techniques.

Transfer Learning in NLP: How Pretraining Enabled Large Language Model Breakthroughs

Transfer learning in NLP lets models reuse knowledge from massive text datasets to perform new tasks with minimal data. Pretrained models like BERT and GPT-3 revolutionized the field by making advanced language AI accessible to everyone.

SLAs and Support: What Enterprises Really Need from LLM Providers in 2026

In 2026, enterprise LLM adoption hinges on SLAs that guarantee uptime, security, compliance, and support, not just model performance. Learn what real contracts include and which providers deliver.

Prompt-Tuning vs Prefix-Tuning: Lightweight Techniques for LLM Control

Prompt tuning and prefix tuning let you adapt large language models with minimal training. Learn how they differ, when to use each, and why neither can replace full fine-tuning for complex tasks.

Bias in Large Language Models: Sources, Measurement, and How to Fix It

Large language models carry hidden biases that affect decisions in hiring, healthcare, and law. Learn where bias comes from, how to measure it, and what’s being done to fix it by 2026.

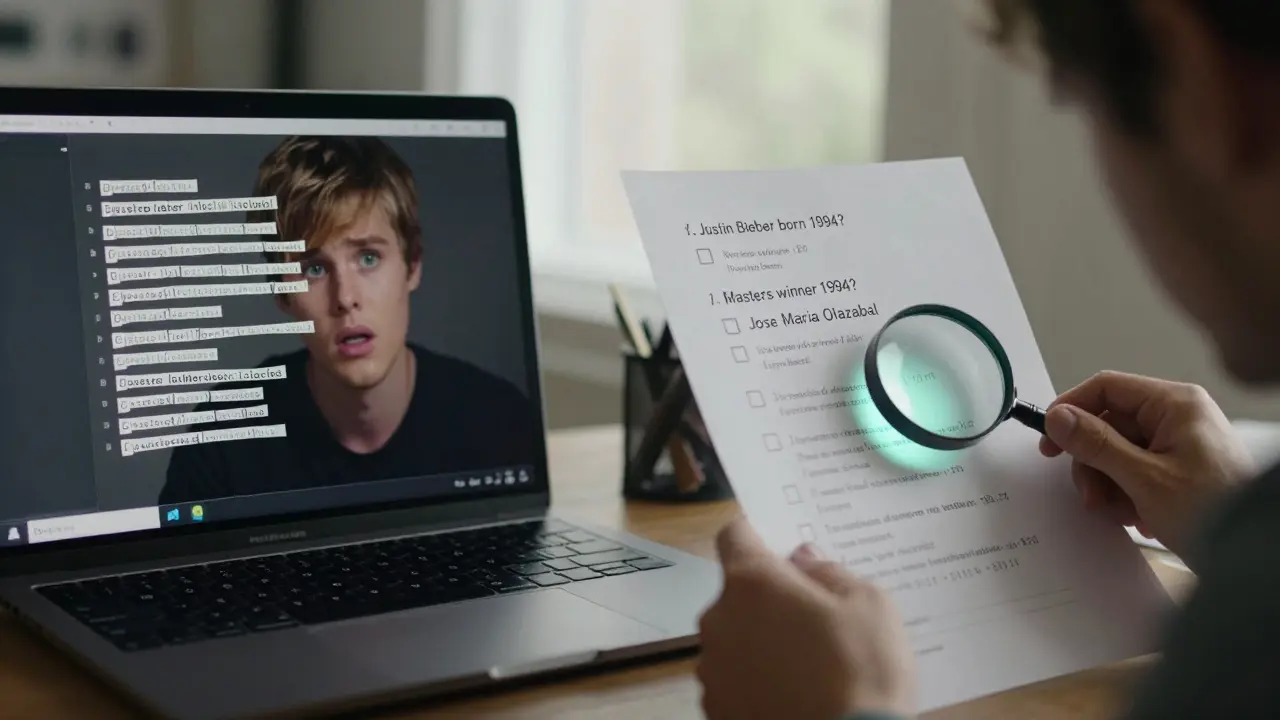

Self-Ask and Decomposition Prompts for Complex LLM Questions

Self-Ask and decomposition prompting improve LLM accuracy on complex questions by breaking them into visible, verifiable steps. Used in legal, medical, and financial AI, they boost accuracy by up to 14% over standard methods, but require careful implementation.

Calibration and Outlier Handling in Quantized LLMs: How to Preserve Accuracy at 4-Bit Precision

Learn how calibration and outlier handling preserve accuracy in 4-bit quantized LLMs. Discover which techniques (AWQ, SmoothQuant, GPTQ) deliver real-world performance and avoid the pitfalls that cause 50% accuracy drops.
